Packet 04

Tutorial: Theory of Matrices in 2D and 3D

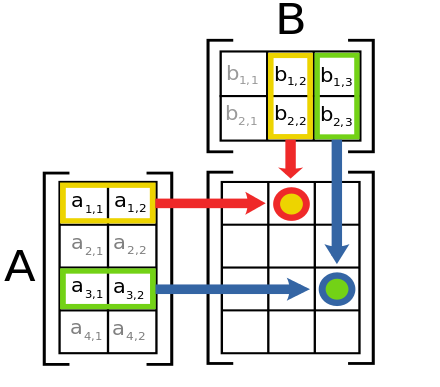

Matrix multiplication

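Suppose we have a matrix $B$ and a vector $\mathbf{u}$ that $B$ acts upon. We have written “$B\mathbf{u}$” for the output vector given by letting $B$ act on $\mathbf{u}$.

Matrices act by sending vectors to other vectors. It is useful to consider another matrix that acts upon the output vector. For example, if $\mathbf{v}:=B\mathbf{u}$, and if $A$ is a matrix that acts upon $\mathbf{v}$, we can write $A\mathbf{v}$. (The colon in $\mathbf{v}:=B\mathbf{u}$ means “$\mathbf{v}$ is defined as $B\mathbf{u}$.”)

Suppose we define $\mathbf{w}:=A\mathbf{v}$, so that $\mathbf{w}=A(B\mathbf{u})$. Then we say that $\mathbf{w}$ is the output of $\mathbf{u}$ under a composition of actions of the matrices $B$ and $A$. It turns out that if we start with two matrices $A$ and $B$, the composition action (letting the matrices act consecutively) can itself be represented by a single matrix. (The composition of linear transformations is a linear transformation.) Let us find this matrix in the case when $A$ and $B$ are $2\times 2$ matrices.

Suppose the entries of $A$ and $B$ are given by

$$
A=\begin{pmatrix}a_{11}&a_{12}\\a_{21}&a_{22}\end{pmatrix},\quad B=\begin{pmatrix}b_{11}&b_{12}\\b_{21}&b_{22}\end{pmatrix}.
$$

Now compute the action of $B$ followed by the action of $A$ on the vector $\mathbf{u}=\begin{pmatrix}u_1\\u_2\end{pmatrix}$:

$$
A(B\mathbf{u})=A\begin{pmatrix}b_{11}u_1+b_{12}u_2\\b_{21}u_1+b_{22}u_2\end{pmatrix}=\begin{pmatrix}(a_{11}b_{11}+a_{12}b_{21})u_1+(a_{11}b_{12}+a_{12}b_{22})u_2\\(a_{21}b_{11}+a_{22}b_{21})u_1+(a_{21}b_{12}+a_{22}b_{22})u_2\end{pmatrix}.
$$

Therefore, we define the product matrix $AB$ by this formula:

$$
AB=\begin{pmatrix}a_{11}b_{11}+a_{12}b_{21}&a_{11}b_{12}+a_{12}b_{22}\\a_{21}b_{11}+a_{22}b_{21}&a_{21}b_{12}+a_{22}b_{22}\end{pmatrix}.
$$

With this definition and the above reasoning, we have that $(AB)\mathbf{u}=A(B\mathbf{u})$. (Because of this equality, we can omit the parentheses and write simply $AB\mathbf{u}$, since the result is the same whether you act first by $B$ and then by $A$, or instead first multiply the matrices and then act by $AB$.)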

Just as for the formula for a matrix acting on a vector, we have multiple ways to interpret the formula for the product matrix $AB$.

- Each entry of the product matrix is given by taking the dot product of a row of $A$ with a column of $B$.

- Each column of the product matrix is given by letting $A$ act upon the corresponding column vector of $B$. In other words, if $\mathbf{b}_1,\mathbf{b}_2$ are the columns of $B$, then $AB=\begin{pmatrix}A\mathbf{b}_1&A\mathbf{b}_2\end{pmatrix}$. (Recall that the action of $A$ upon $\mathbf{b}_i$ has two interpretations of its own!)

- Each row of the product matrix is given by letting the entries in the corresponding row vector of $A$ provide the coefficients for a linear combination of the rows of $B$.

The first of these interpretations is best for doing calculations, and the second is best for understanding abstract theory.
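The three interpretations can be compared numerically. The following is a minimal sketch in plain Python, assuming matrices are stored as lists of rows; the function names and the example matrices are hypothetical and chosen only for illustration.

```python
def act(A, v):
    """Let the matrix A act on the column vector v."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

def matmul_entries(A, B):
    """Entry (i, j) is the dot product of row i of A with column j of B."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

def matmul_columns(A, B):
    """Column j of the product is A acting on column j of B."""
    cols_B = [[row[j] for row in B] for j in range(len(B[0]))]
    cols_AB = [act(A, b) for b in cols_B]
    # Reassemble the list of columns into a list of rows.
    return [[col[i] for col in cols_AB] for i in range(len(A))]

def matmul_rows(A, B):
    """Row i of the product is a linear combination of the rows of B,
    with coefficients taken from row i of A."""
    result = []
    for a_row in A:
        combo = [0] * len(B[0])
        for coeff, b_row in zip(a_row, B):
            combo = [c + coeff * b for c, b in zip(combo, b_row)]
        result.append(combo)
    return result

# Hypothetical example data.
A = [[1, 2], [3, 4]]
B = [[0, 1], [5, -2]]
u = [7, -1]

AB = matmul_entries(A, B)
print(AB)                            # the product matrix
print(act(AB, u), act(A, act(B, u)))  # (AB)u and A(Bu) should agree
```

All three functions should return the same matrix, and acting by the product should match acting by the second factor first and then by the first factor.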

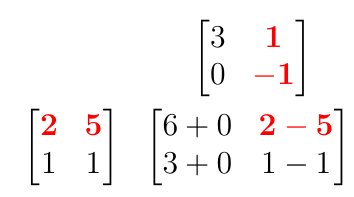

Bottom-Right Method for computing $AB$:

E.g.:

E.g.:

Max Born, Nobel Prize lecture:

Question 04-01

Some matrix products

Compute the following matrix products by hand:

- (a)

- (b)

In both (a) and (b) there are three matrices: two are given and their product is the third. In (a), what size vectors do these three matrices act upon? (How many components?) In (b), what do you notice about the nature of the two matrices and their resulting product? Can you guess a rule that generalizes this fact?

Question 04-02

Double rotation

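Show that $R_\theta R_\theta=R_{2\theta}$, where $R_\theta$ denotes the matrix of rotation by $\theta$. In other words, two rotations by $\theta$ are equivalent to one rotation by $2\theta$.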

Exercise 04-01

Nilpotent matrix

Define the matrix :

For , calculate the powers , , , , .

(What special property does have? A matrix with that property is said to be nilpotent.)

Identity matrix

Notice that the diagonal matrix with $1$’s on the diagonal acts upon another vector or matrix by doing nothing:

$$
\begin{pmatrix}1&0\\0&1\end{pmatrix}\begin{pmatrix}u_1\\u_2\end{pmatrix}=\begin{pmatrix}u_1\\u_2\end{pmatrix}.
$$

This matrix is called the identity matrix, since it preserves the identity of whatever it acts upon. It is frequently written “$I$”, regardless of the size of the matrix. So $I\mathbf{u}=\mathbf{u}$ for any $\mathbf{u}$.

Sometimes $I$ is written more specifically as $I_n$, where $n$ is the number of rows or columns. (Identity matrices always have the same number of rows and columns.)

Exercise 04-02

Reflections square to identity

Show that for any with .

This means that reflecting twice across the same line gives back the original vector. (In 2D the hyperplane of reflection is just a line.)

Matrices acting on matrices

It is useful to think of matrices as acting upon other matrices by multiplying on the left. The effect is the same as the effect of acting on a vector, but it performs this (same) action on each column vector separately. The action is therefore the same across any given row.

Diagonal matrices

When two diagonal matrices are multiplied, the result is a diagonal matrix. The diagonal entries of the product are simply the products of the diagonal entries:

$$
\begin{pmatrix}a_1&0\\0&a_2\end{pmatrix}\begin{pmatrix}b_1&0\\0&b_2\end{pmatrix}=\begin{pmatrix}a_1b_1&0\\0&a_2b_2\end{pmatrix}.
$$

A consequence is that diagonal matrices commute with each other: $AB=BA$ when $A$ and $B$ are both diagonal. (As seen with 3D rotations, this frequently fails for matrix multiplication in general.)
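As a quick numerical check of the commuting claim, here is a small sketch in plain Python. The helper `matmul` and all four example matrices are hypothetical illustrations, not taken from the packet.

```python
def matmul(A, B):
    """Product of two matrices stored as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

# Two diagonal matrices: the products agree in either order.
D1 = [[2, 0], [0, 3]]
D2 = [[5, 0], [0, -1]]
print(matmul(D1, D2), matmul(D2, D1))

# Two non-diagonal matrices: the order matters.
A = [[1, 2], [3, 4]]
B = [[0, 1], [1, 0]]
print(matmul(A, B), matmul(B, A))
```

In this example `B` is a permutation matrix: multiplying by it on the right swaps the columns of `A`, while multiplying on the left swaps the rows.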

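Row-scale matrices

The diagonal matrices

$$
\begin{pmatrix}\lambda&0&0\\0&1&0\\0&0&1\end{pmatrix},\quad\begin{pmatrix}1&0&0\\0&\lambda&0\\0&0&1\end{pmatrix},\quad\begin{pmatrix}1&0&0\\0&1&0\\0&0&\lambda\end{pmatrix}
$$

have the effect of scaling rows $1$, $2$, $3$ (respectively) by the number $\lambda$, without changing other rows, when they multiply a vector or a matrix on the left. These matrices are called row-scale matrices.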

Permutation matrices

When two permutation matrices are multiplied, the result is a permutation matrix. For example:

Question 04-03

Permutation matrices

Explain why the product of two permutation matrices is always a permutation matrix. (Recall the definition: a matrix is a permutation matrix when each row and each column contains exactly one $1$ and the other entries are $0$.)

Row-swap matrices

A permutation matrix acts upon another matrix (multiplying on the left) by permuting the rows of the matrix, in the same way that it would permute the rows of a vector:

The most important permutation type is one which simply swaps two rows and leaves the others unchanged. This type of matrix is called a row-swap matrix.

The row-swap action is performed by a permutation matrix which has $1$’s on the diagonal except in two rows. In these two rows, the $1$’s of the identity matrix are swapped with each other.

Example

Row-swap example

The matrix which swaps rows and (of a vector or another matrix) works like this:

To obtain this row-swap matrix, start with the diagonal matrix with ’s along the diagonal, and then swap rows and .

Row-add matrices

A matrix that has $1$’s on the diagonal and $0$’s in the other entries, except for a single additional entry $\lambda$, is called an elementary shear matrix, or a row-add matrix:

If the entry $\lambda$ is in the $i^{\text{th}}$ row and $j^{\text{th}}$ column, this matrix acts by taking the $j^{\text{th}}$ row (of a matrix or vector that it’s acting upon) and adding it into the $i^{\text{th}}$ row with a coefficient $\lambda$:

Remember that when a matrix is multiplied on the left on a vector or a matrix, the top row of the matrix controls the top row of the output.
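The three kinds of elementary matrices can be built by modifying an identity matrix. The sketch below is in plain Python; the function names are hypothetical and rows are indexed from 0 (so "row 2" in the text is index 1 in the code). The row-add example reuses the $2\times 2$ illustration given in Problem 04-02.

```python
from fractions import Fraction

def identity(n):
    """The n-by-n identity matrix as a list of rows."""
    return [[1 if r == c else 0 for c in range(n)] for r in range(n)]

def matmul(A, B):
    """Product of two matrices stored as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

def row_scale(n, i, lam):
    """Scales row i by lam when multiplied on the left."""
    E = identity(n)
    E[i][i] = lam
    return E

def row_swap(n, i, j):
    """Swaps rows i and j when multiplied on the left."""
    E = identity(n)
    E[i], E[j] = E[j], E[i]
    return E

def row_add(n, i, j, lam):
    """Adds lam times row j into row i when multiplied on the left."""
    E = identity(n)
    E[i][j] = lam
    return E

M = [[1, 2], [3, 4], [5, 6]]          # hypothetical 3x2 matrix
print(matmul(row_scale(3, 1, 10), M))  # middle row scaled by 10
print(matmul(row_swap(3, 0, 2), M))    # first and last rows swapped

# Adding -3/2 times the first row into the second row.
N = [[2, -4], [3, -1]]
print(matmul(row_add(2, 1, 0, Fraction(-3, 2)), N))
```

`Fraction` is used so that the coefficient $-3/2$ is handled exactly rather than as a floating-point number.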

Matrix algebra

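Matrix addition and scaling

Matrices can be added, subtracted, and multiplied by scalars, just like vectors. These operations are done componentwise:

$$
\begin{pmatrix}a&b\\c&d\end{pmatrix}+\begin{pmatrix}e&f\\g&h\end{pmatrix}=\begin{pmatrix}a+e&b+f\\c+g&d+h\end{pmatrix},\quad\lambda\begin{pmatrix}a&b\\c&d\end{pmatrix}=\begin{pmatrix}\lambda a&\lambda b\\\lambda c&\lambda d\end{pmatrix}.
$$

In terms of the actions performed by these matrices, addition is like addition of functions: the action of a sum of matrices is to give the sum of the outputs under the actions of the separate matrices. For functions, this would be written $(f+g)(x)=f(x)+g(x)$. For matrices, we write $(A+B)\mathbf{v}=A\mathbf{v}+B\mathbf{v}$, and similarly for subtraction, scalar multiples, and other variations on basic algebra operations.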

Matrix distributivity

It is important to notice that matrix multiplication is compatible with matrix addition in the usual sense of distributivity:

$$
A(B+C)=AB+AC.
$$

One way to prove this formula is to show that it is true after both sides are applied to an arbitrary vector $\mathbf{v}$. (To complete the proof, let $\mathbf{v}$ take the specific value of each standard basis vector $\mathbf{e}_i$ in turn, and you find that column $i$ of the matrices on each side must agree.) To show the equality after applying both sides to an arbitrary $\mathbf{v}$, we need to check distributivity of matrix actions on vectors in general:

$$
A(\mathbf{u}+\mathbf{v})=A\mathbf{u}+A\mathbf{v}.
$$
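A numerical spot check is not a proof, but it can make the distributive law concrete. The sketch below uses plain Python with hypothetical helper names and example matrices.

```python
def matmul(A, B):
    """Product of two matrices stored as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

def matadd(A, B):
    """Componentwise sum of two matrices of the same size."""
    return [[a + b for a, b in zip(row_a, row_b)]
            for row_a, row_b in zip(A, B)]

# Hypothetical example data.
A = [[1, 2], [3, 4]]
B = [[0, 1], [1, 0]]
C = [[2, 0], [0, 2]]

left = matmul(A, matadd(B, C))               # A(B + C)
right = matadd(matmul(A, B), matmul(A, C))   # AB + AC
print(left, right)
```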

Question 04-04

Linearity / Distributivity of matrix actions

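Show that $A(\mathbf{u}+\mathbf{v})=A\mathbf{u}+A\mathbf{v}$ whenever a matrix $A$ acts on vectors $\mathbf{u}$ and $\mathbf{v}$.

Hint: first try this with a $3\times 3$ matrix and 3D vectors with arbitrary entries. Then to show it in general, use the “summation notation” for the matrix action on a vector:

$$
(A\mathbf{u})_i=\sum_{j}a_{ij}u_j.
$$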

Matrix division

For real numbers, division and multiplication are inverse processes, meaning that division “undoes” the action of multiplication, and vice versa.

Any number can divide another number provided it is not zero. For matrices, the situation is more complex, because “being zero” is more complex. Many matrices have non-zero entries, yet they “act as zero” upon certain other vectors. When this happens, the action cannot be “undone”, because you cannot divide by the zero action performed on those vectors.

Example

Projection is not invertible

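Consider the projection matrix $P=\begin{pmatrix}1&0\\0&0\end{pmatrix}$ which sends a vector $\begin{pmatrix}x\\y\end{pmatrix}$ to the vector $\begin{pmatrix}x\\0\end{pmatrix}$. This matrix acts by zero upon any vector $\begin{pmatrix}0\\y\end{pmatrix}$, sending it to $\begin{pmatrix}0\\0\end{pmatrix}$. This action cannot be undone! (What specific vector would the inverse action send $\begin{pmatrix}0\\0\end{pmatrix}$ to?)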

So, some matrices can be divisors. These are called invertible matrices. Others cannot, and these are called non-invertible matrices. A matrix $A$ is invertible when the inverse matrix $A^{-1}$ exists.

When it exists, an inverse matrix $A^{-1}$ acts by undoing multiplication by $A$. Concretely, this means:

$$
A^{-1}(A\mathbf{u})=\mathbf{u},\qquad A(A^{-1}\mathbf{u})=\mathbf{u}.
$$

Notice in the second formula that the action of $A$ can be undone before $A$ even acts. (Ordinary division also works like this!) Another way to look at it is that $A$ acts by undoing the effect of $A^{-1}$. (Ordinary division: multiplication by $a$ undoes the effect of multiplying by $1/a$.)

Recall the “identity matrix” $I$ which satisfies $I\mathbf{u}=\mathbf{u}$ for any $\mathbf{u}$. So we can write the above formulas using matrices:

$$
A^{-1}A=I,\qquad AA^{-1}=I.
$$

Notice that we have to write both formulas because matrix multiplication is not commutative! (Ordinary division: $a^{-1}a=1$ and $aa^{-1}=1$ are equivalent because $ab=ba$ for any numbers.)

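Inverse of $2\times 2$ matrices

There is a general formula for the inverse of any invertible $2\times 2$ matrix that should be memorized:

$$
A=\begin{pmatrix}a&b\\c&d\end{pmatrix},\quad A^{-1}=\frac{1}{ad-bc}\begin{pmatrix}d&-b\\-c&a\end{pmatrix}.
$$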
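The $2\times 2$ inverse formula translates directly into code. This is a minimal sketch in plain Python, assuming integer entries so that `Fraction` can keep the arithmetic exact; the function names and the example matrix are hypothetical.

```python
from fractions import Fraction

def matmul(A, B):
    """Product of two matrices stored as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

def inverse_2x2(A):
    """Inverse of a 2x2 integer matrix: swap the diagonal entries,
    negate the off-diagonal entries, divide by ad - bc."""
    (a, b), (c, d) = A
    divisor = a * d - b * c
    if divisor == 0:
        raise ValueError("the formula does not make sense when ad - bc = 0")
    return [[Fraction(d, divisor), Fraction(-b, divisor)],
            [Fraction(-c, divisor), Fraction(a, divisor)]]

A = [[4, 7], [2, 6]]      # hypothetical example; ad - bc = 10
A_inv = inverse_2x2(A)
print(A_inv)
print(matmul(A_inv, A))   # expected to be the identity
print(matmul(A, A_inv))   # expected to be the identity
```

Both products are computed because matrix multiplication is not commutative in general, so each order is a separate check.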

Exercise 04-03

Checking formula for inverse

Check the formula for $A^{-1}$ for a $2\times 2$ matrix $A$ by computing by hand $A^{-1}A$ and $AA^{-1}$. (You should obtain $I_2$ in both cases.)

Which $2\times 2$ matrices have an inverse?

(Hint: you just checked a formula that works whenever it makes sense, so there is an inverse whenever it makes sense, given by the formula. Suppose $a,b,c,d$ are such that the formula does not make sense. Can you find a nonzero vector which is sent to the zero vector by $A$?)

Exercise 04-04

Inverse of a reflection

Show (in 2D) that the matrix of a reflection is the inverse of itself. Show this first abstractly (using the definition of inverse), and then concretely (using the formulas for reflection and inverse matrices).

Exercise 04-05

Inverse of a rotation

Show (in 2D) that the inverse of the matrix of rotation by $\theta$ is the matrix of rotation by $-\theta$.

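The inverse of $A$ is useful for finding a vector $\mathbf{u}$ which is sent to a given vector $\mathbf{v}$ by the action of a given matrix $A$:

$$
A\mathbf{u}=\mathbf{v}\quad\Longrightarrow\quad\mathbf{u}=A^{-1}\mathbf{v}.
$$

If we know $A$ and $\mathbf{v}$, and we want $\mathbf{u}$, this method will give us $\mathbf{u}=A^{-1}\mathbf{v}$.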
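The preimage method can be sketched in a few lines of plain Python. The matrix and target vector below are hypothetical (they are not the data of the Example or of any Problem), and integer entries are assumed so that `Fraction` stays exact.

```python
from fractions import Fraction

def act(A, v):
    """Let the matrix A act on the column vector v."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

def inverse_2x2(A):
    """Inverse of a 2x2 integer matrix via the ad - bc formula."""
    (a, b), (c, d) = A
    divisor = a * d - b * c
    if divisor == 0:
        raise ValueError("matrix is not invertible")
    return [[Fraction(d, divisor), Fraction(-b, divisor)],
            [Fraction(-c, divisor), Fraction(a, divisor)]]

A = [[3, 1], [4, 2]]          # hypothetical matrix, ad - bc = 2
v = [5, 6]                    # hypothetical target vector

u = act(inverse_2x2(A), v)    # candidate preimage: the inverse acting on v
print(u)
print(act(A, u))              # check: A should send u back to v
```

The final line mirrors the "check this answer" step: apply the matrix to the computed vector and compare with the given target.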

Example

Inverse matrix to find preimage

Problem: Define

Find a vector with the property that , meaning that sends to the given .

Solution: First we compute using the formula. We have , so . Now compute that , and this is our answer for . Check this answer by computing , as expected.

Exercise 04-06

Inverse matrix to find preimage

Repeat the previous example for this data:

Determinant of $2\times 2$ matrices

The determinant of a matrix $A$, written $\det A$, is a single number that is associated to the matrix.

For a $2\times 2$ matrix, the determinant is given by the formula in the divisor of the formula for $A^{-1}$:

$$
\det\begin{pmatrix}a&b\\c&d\end{pmatrix}=ad-bc.
$$

We will learn many things about determinants. The important thing for now is that $A^{-1}$ exists if and only if $\det A\neq 0$.
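The invertibility test can be illustrated with two small matrices. This sketch is plain Python with hypothetical names: `P` is a projection onto the first coordinate (assumed here only as an example of a non-invertible matrix) and `R` is rotation by a quarter turn.

```python
def det_2x2(A):
    """Determinant ad - bc of a 2x2 matrix stored as a list of rows."""
    (a, b), (c, d) = A
    return a * d - b * c

def act(A, v):
    """Let the matrix A act on the column vector v."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

P = [[1, 0], [0, 0]]    # projection: keeps the first component, kills the second
R = [[0, -1], [1, 0]]   # rotation by 90 degrees

print(det_2x2(P))       # zero, so P has no inverse
print(act(P, [0, 5]))   # a nonzero vector that P sends to the zero vector
print(det_2x2(R))       # nonzero, so R is invertible
```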

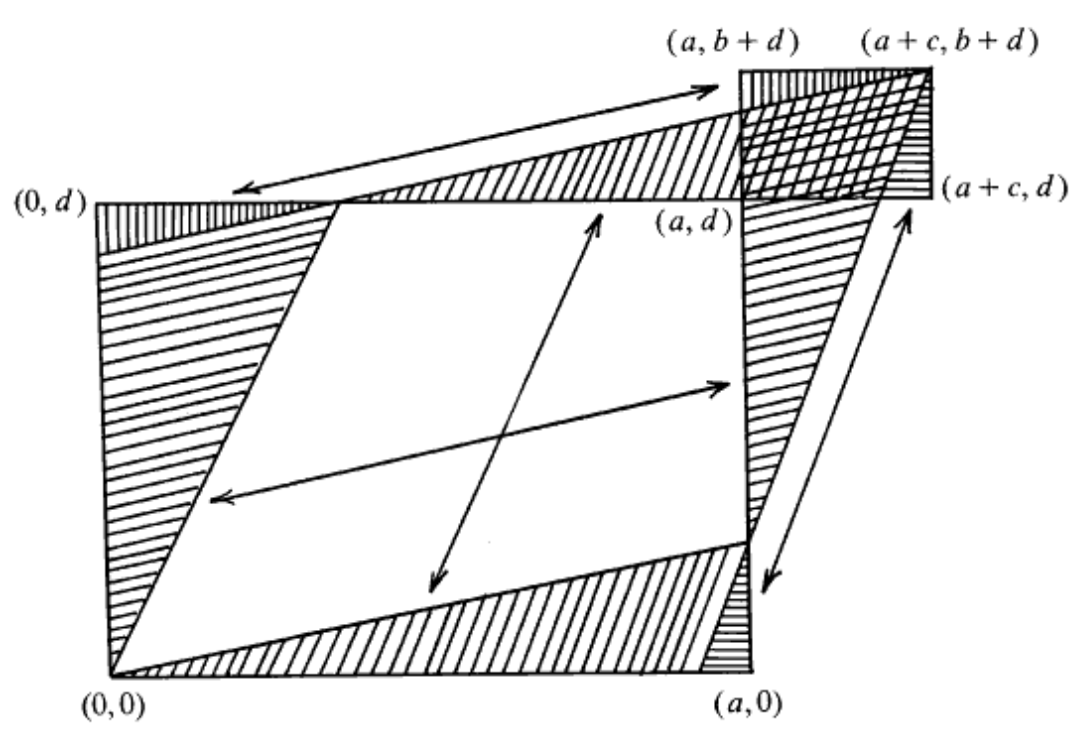

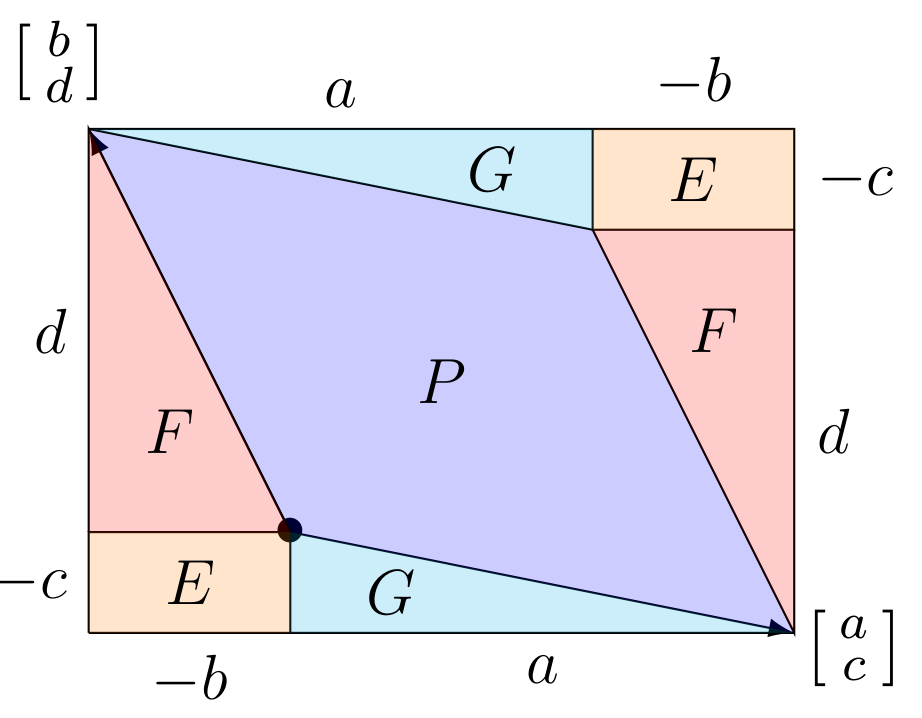

Optional: interpretation of determinant

Just for fun, here is a visual proof (by S. Golomb) that $\det A=ad-bc$ gives the area of the parallelogram spanned by the column vectors of $A$, namely $\begin{pmatrix}a\\c\end{pmatrix}$ and $\begin{pmatrix}b\\d\end{pmatrix}$:

Here is another visual for parallelograms from obtuse vectors:

Eigenvectors, eigenvalues

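We saw that standard basis vectors are eigenvectors for diagonal matrices, and the diagonal entries are the eigenvalues. If $A$ is diagonal with diagonal entries $\lambda_1,\dots,\lambda_n$, then $A\mathbf{e}_i=\lambda_i\mathbf{e}_i$.

Now consider the problem of finding eigenvectors and eigenvalues for a non-diagonal matrix $A$. This means finding a vector $\mathbf{v}\neq 0$ and a scalar $\lambda$ such that $A\mathbf{v}=\lambda\mathbf{v}$.

Suppose we start with a hypothetical $\lambda$. Write $\lambda I$ for the matrix $\begin{pmatrix}\lambda&0\\0&\lambda\end{pmatrix}$. So we wish to solve $A\mathbf{v}=\lambda I\mathbf{v}$, which is equivalent to $(A-\lambda I)\mathbf{v}=0$. Now write $A_\lambda:=A-\lambda I$. So we wish to find $\mathbf{v}\neq 0$ such that $A_\lambda\mathbf{v}=0$.

Notice that if $A_\lambda$ is invertible, then there is no solution. (The only solution would be $\mathbf{v}=0$ and that is not allowed for an eigenvector.) The reason is that if $A_\lambda^{-1}$ exists, we can let it act upon both sides of the equation to obtain $\mathbf{v}=A_\lambda^{-1}0=0$. Therefore, we only have a chance of solving this if $A_\lambda$ is non-invertible. Recall from above that $A_\lambda$ is non-invertible precisely when $\det A_\lambda=0$.

It turns out we can solve the equation $A_\lambda\mathbf{v}=0$ with $\mathbf{v}\neq 0$ if $\det A_\lambda=0$. Let us do this by hand for $2\times 2$ matrices.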

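Derivation that if $\det M=0$ then $M\mathbf{v}=0$ can be solved with $\mathbf{v}\neq 0$

Let $M=\begin{pmatrix}a&b\\c&d\end{pmatrix}$. Assume $\det M=0$, which means $ad-bc=0$ or $ad=bc$ or (when $a$ and $b$ are nonzero) $c/a=d/b$. Write $x$ for the common ratio $c/a=d/b$. Thus $M=\begin{pmatrix}a&b\\xa&xb\end{pmatrix}$, and now we wish to solve the equation:

$$
\begin{pmatrix}a&b\\xa&xb\end{pmatrix}\begin{pmatrix}v_1\\v_2\end{pmatrix}=\begin{pmatrix}0\\0\end{pmatrix}.
$$

This means $av_1+bv_2=0$ and $xav_1+xbv_2=0$. Dividing the latter by $x$ we arrive at the former, so we can take any $v_1,v_2$ that satisfy $av_1+bv_2=0$. Let us choose $\mathbf{v}=\begin{pmatrix}-b\\a\end{pmatrix}$. You can now check directly that it works:

$$
\begin{pmatrix}a&b\\xa&xb\end{pmatrix}\begin{pmatrix}-b\\a\end{pmatrix}=\begin{pmatrix}-ab+ba\\-xab+xba\end{pmatrix}=\begin{pmatrix}0\\0\end{pmatrix}.
$$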

If , there must be a vector such that for some scalar

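Proof: Define $A=\begin{pmatrix}a&b\\c&d\end{pmatrix}$, and so

$$
A_\lambda=A-\lambda I_2=\begin{pmatrix}a-\lambda&b\\c&d-\lambda\end{pmatrix}.
$$

Suppose $\det A_\lambda=0$. By the formula for $\det$, this means $(a-\lambda)(d-\lambda)-bc=0$. That is a quadratic equation in $\lambda$ and could be rewritten as $\lambda^2-(a+d)\lambda+(ad-bc)=0$. By the quadratic formula, the solutions are $\lambda=\frac{(a+d)\pm\sqrt{(a+d)^2-4(ad-bc)}}{2}$. Write these two solutions as $\lambda_1$ and $\lambda_2$.

So we have found $\lambda_1$ and $\lambda_2$ with $\det A_{\lambda_1}=0$ and $\det A_{\lambda_2}=0$. In either case we can solve for a $\mathbf{v}_1\neq 0$ such that $A_{\lambda_1}\mathbf{v}_1=0$ and a $\mathbf{v}_2\neq 0$ such that $A_{\lambda_2}\mathbf{v}_2=0$ using the method in the box above.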

Example

Finding eigenvectors and eigenvalues

Problem: Define . Find the two eigenvalues and some (any) two corresponding eigenvectors of .

Solution: First write . Then means . Solve this quadratic equation to obtain and . We have found the two eigenvalues.

Next, write out the matrices

Our goal is to find and such that . We can guess two solutions: let and let . To check that these are indeed eigenvectors of the original corresponding to and , compute:

You could also use the procedure in the gray box “Derivation.” For we have , and for we have . Then we would get and . Notice that the first eigenvector is a scalar multiple of ours above: .

This is true in general: if $A\mathbf{v}=\lambda\mathbf{v}$, then $A(c\mathbf{v})=\lambda(c\mathbf{v})$ for any scalar $c$. In other words, you can scale an eigenvector and the result (if nonzero) is still an eigenvector with the same eigenvalue. This is because $A(c\mathbf{v})=cA\mathbf{v}$ when $c$ is a scalar, and of course $c\lambda=\lambda c$ when both are scalars. In yet other words, the line spanned by an eigenvector $\mathbf{v}$, called an eigenline, is preserved by the matrix: every vector on this line is mapped back to something on this line.
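The whole procedure (quadratic equation for the eigenvalues, then a nonzero solution read off from a row of the shifted matrix) can be sketched in plain Python. The function names and the example matrix are hypothetical; the sketch assumes the eigenvalues are real and uses floating-point arithmetic, so results are compared approximately.

```python
import math

def act(A, v):
    """Let the matrix A act on the column vector v."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

def eigenvalues_2x2(A):
    """Solve lambda^2 - (a + d) lambda + (ad - bc) = 0 by the quadratic formula."""
    (a, b), (c, d) = A
    trace, det = a + d, a * d - b * c
    disc = trace ** 2 - 4 * det
    if disc < 0:
        raise ValueError("no real eigenvalues")
    root = math.sqrt(disc)
    return (trace + root) / 2, (trace - root) / 2

def eigenvector_2x2(A, lam):
    """Return a nonzero v with (A - lam I) v = 0, assuming lam is an eigenvalue.
    For a nonzero row (p, q) of the shifted matrix, v = (-q, p) works."""
    (a, b), (c, d) = A
    for p, q in ((a - lam, b), (c, d - lam)):
        if p != 0 or q != 0:
            return [-q, p]
    return [1, 0]  # the shifted matrix is zero: every nonzero vector works

A = [[2, 1], [1, 2]]   # hypothetical example matrix
for lam in eigenvalues_2x2(A):
    v = eigenvector_2x2(A, lam)
    scaled = [3 * x for x in v]   # a scalar multiple of the eigenvector
    print(lam, v, act(A, v), act(A, scaled))
```

For each eigenvalue, the printed image of `v` should equal the eigenvalue times `v`, and the same should hold for the scaled copy, which illustrates the eigenline remark.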

Summary

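- Matrices can be multiplied by each other. Express $B$ as a row of column vectors $\begin{pmatrix}\mathbf{b}_1&\cdots&\mathbf{b}_n\end{pmatrix}$; then $AB$ is the matrix which is the row of column vectors $\begin{pmatrix}A\mathbf{b}_1&\cdots&A\mathbf{b}_n\end{pmatrix}$. In other words, $AB$ is computed by letting $A$ act upon the columns of $B$.

- Matrices acting on vectors satisfy $A(\mathbf{u}+\mathbf{v})=A\mathbf{u}+A\mathbf{v}$, and acting on matrices they satisfy $A(B+C)=AB+AC$.

- The identity matrix $I$ has the property that $IA=A$ and $AI=A$ for any other matrix $A$.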

- Row-scale matrices act on a matrix (or vector) by scaling a chosen row. Row-swap matrices act by swapping two rows. Row-add matrices act by adding one row into another.

- An “invertible” matrix $A$ is one having an inverse $A^{-1}$, which has the property that $A^{-1}A=AA^{-1}=I_n$. (Here $A$ is an $n\times n$ matrix.) For a $2\times 2$ matrix, its inverse is given by a formula:

$$
A=\begin{pmatrix}a&b\\c&d\end{pmatrix},\quad A^{-1}=\frac{1}{ad-bc}\begin{pmatrix}d&-b\\-c&a\end{pmatrix}.
$$

- The inverse matrix $A^{-1}$ can be *used to solve for $\mathbf{u}$* in the equation $A\mathbf{u}=\mathbf{v}$, where $A$ and $\mathbf{v}$ are both given and $\mathbf{u}$ is sought.

- The *determinant* $\det A$ for the $2\times 2$ matrix $A$ (as above) is the number $ad-bc$. The matrix $A$ is *invertible if and only if $\det A\neq 0$*.

- Given the matrix $A$, an *eigenvector* $\mathbf{v}$ is a vector ($\neq 0$) such that *$A\mathbf{v}=\lambda\mathbf{v}$* for some scalar $\lambda$ called the *eigenvalue* corresponding to $\mathbf{v}$.

- We can *find eigenvalues and eigenvectors* by: setting $\det(A-\lambda I_2)=0$ and solving a quadratic equation to find $\lambda_1$, $\lambda_2$, then plugging these in for $\lambda$ and writing $A_{\lambda_1}=A-\lambda_1 I_2$ and $A_{\lambda_2}=A-\lambda_2 I_2$, and then manually solving for vectors $\mathbf{v}_1$ and $\mathbf{v}_2$ satisfying $A_{\lambda_1}\mathbf{v}_1=0$ and $A_{\lambda_2}\mathbf{v}_2=0$. This is possible using the fact that one row of $A_{\lambda_i}$ will be a multiple $x$ times the other row.

## Problems due 14 Feb 2024 by 12:00pm

##### Problem 04-01

> [!question] Basic multiplications; differing sizes
>
> Define the following matrices:
> $$
> A=\begin{pmatrix}1&2\\3&6\\2&1\end{pmatrix},\quad B=\begin{pmatrix}1&-1\\-1&0\end{pmatrix},\quad C=\begin{pmatrix}1&0&1\\1&3&3\end{pmatrix}.
> $$
> Compute the matrix products: $AB$, $AC$, $(BC)A$, $B(CA)$.

##### Problem 04-02

> [!question] Row reduction using elementary matrices
>
> The row-scale, row-add, and row-swap matrices can be applied in a sequence to *manipulate the entries* of another matrix or vector. A very useful goal is to convert a matrix into an *upper-triangular* matrix, which is a matrix that has all entries *below* the main diagonal equal to zero.
>
> For example, the matrix $\begin{pmatrix}2&-4\\3&-1\end{pmatrix}$ can be manipulated into an upper-triangular matrix by adding $-3/2$ times row $1$ into row $2$, because $(-3/2)\cdot 2=-3$ and $-3+3=0$. This action is performed by the row-add matrix with $-3/2$ in the $2^{\text{nd}}$ row of the $1^{\text{st}}$ column:
> $$
> \begin{pmatrix}1&0\\-3/2&1\end{pmatrix}\begin{pmatrix}2&-4\\3&-1\end{pmatrix}=\begin{pmatrix}2&-4\\0&5\end{pmatrix}.
> $$
> Now, using a sequence of matrix actions chosen from the types *row-scale* and *row-add*, convert the matrix
> $$
> A = \begin{pmatrix}3&2&-2\\15&12&-8\\9&2&-6\end{pmatrix}
> $$
> into an upper-triangular matrix. (Hint: first create two zeros in the first column, then create the zero needed in the second column.)
>
> Now multiply together the manipulation matrices used to perform your actions (multiplied right-to-left in order of the actions so that the actions are composed).
>
> Finally, show that this product matrix converts the original matrix to an upper-triangular matrix by multiplying on the left.

##### Problem 04-03

> [!question] Matrix inversion to find preimages
>
> In each case below, show that $\det A\neq 0$, compute the inverse $A^{-1}$, and use it to find the vector $\mathbf{u}$ satisfying the equation:
> - (a) $\begin{pmatrix}1&2\\3&5\end{pmatrix}\mathbf{u}=\begin{pmatrix}5\\-2\end{pmatrix}$
> - (b) $\begin{pmatrix}2&5\\-3&-7\end{pmatrix}\mathbf{u}=\begin{pmatrix}1\\8\end{pmatrix}$

##### Problem 04-04

> [!question] Calculate eigenvalues and eigenvectors for $2\times 2$ matrix
>
> Imitate the Example to find the eigenvalues and eigenvectors for $A$:
> $$
> A=\begin{pmatrix}7&4\\-3&-1\end{pmatrix}.
> $$

##### Problem 04-05

> [!question] Actions on the right: rows v. columns
>
> In this problem, we consider the action of a matrix upon another matrix by multiplication *on the right*. That is, the action of $B$ upon $A$ by sending $A$ to $AB$. (The effect is very similar to acting on the left, except that the roles of ‘rows’ and ‘columns’ are reversed!)
> - (a) Describe the action of a $3\times 3$ matrix $B$ on the right upon a *row vector* $(v_1\;v_2\;v_3)$. (To obtain the formula, take the matrix product treating the row vector as a $1\times 3$ matrix. Now interpret the formula analogously to the first point in the Summary.)
> - (b) Describe the action of $B$ multiplying on the right on a *matrix* $A$ in terms of the *row vectors* of $A$.
> - (c) What does the matrix $B_3(\lambda)=\begin{pmatrix}1&0&0\\0&1&0\\0&0&\lambda\end{pmatrix}$ do to another $3\times 3$ matrix $A$ when it acts on the right?
> - (d) What is the $3\times 3$ matrix that *swaps columns $i$ and $j$* by acting on the right?
> - (e) What is the $3\times 3$ matrix that *adds column $j$ into column $i$* by acting on the right?