Packet 05

Vectors I: Generators

Basics of nD vectors

Vectors in $\mathbb{R}^n$ have $n$ real number components:

$$\mathbf{v} = (v_1, v_2, \ldots, v_n).$$

Such vectors are added componentwise, and scalars multiply every component simultaneously. All the abstract operations and properties of vectors apply to vectors in $\mathbb{R}^n$:

- Operations: addition and scalar multiplication,

- Properties: commutativity, associativity, distributivity, zero vector.

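There are $n$ standard basis vectors:

$$\mathbf{e}_1 = (1, 0, \ldots, 0), \quad \mathbf{e}_2 = (0, 1, 0, \ldots, 0), \quad \ldots, \quad \mathbf{e}_n = (0, \ldots, 0, 1).$$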

Decomposition works as in 2D and 3D:

$$\mathbf{v} = v_1\mathbf{e}_1 + v_2\mathbf{e}_2 + \cdots + v_n\mathbf{e}_n.$$

Pairs of vectors in $n$D also have dot products, defined by summing component products:

$$\mathbf{v}\cdot\mathbf{w} = v_1w_1 + v_2w_2 + \cdots + v_nw_n.$$

The norm of an $n$D vector is still $|\mathbf{v}| = \sqrt{\mathbf{v}\cdot\mathbf{v}}$.

The dot product still has the meaning of “relative alignment between vectors,” and can still be used to determine the angle $\theta$ between vectors using the cosine formula, $\cos\theta = \dfrac{\mathbf{v}\cdot\mathbf{w}}{|\mathbf{v}|\,|\mathbf{w}|}$. However, this angle is considerably less important in $n$D.

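Projection in $n$D is very important. It is computed with the same formula:

$$\mathrm{proj}_{\mathbf{w}}\mathbf{v} = \frac{\mathbf{v}\cdot\mathbf{w}}{\mathbf{w}\cdot\mathbf{w}}\,\mathbf{w}.$$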

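We also have $\mathrm{orth}_{\mathbf{w}}\mathbf{v} = \mathbf{v} - \mathrm{proj}_{\mathbf{w}}\mathbf{v}$. Notice that $\mathrm{orth}_{\mathbf{w}}\mathbf{v}$ now lives in the $(n-1)$-dimensional hyperplane perpendicular to $\mathbf{w}$. Given various $\mathbf{v}$ and a fixed $\mathbf{w}$, the various $\mathrm{proj}_{\mathbf{w}}\mathbf{v}$ are all parallel to $\mathbf{w}$, but the various $\mathrm{orth}_{\mathbf{w}}\mathbf{v}$ are not all parallel to each other.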
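The formulas above translate directly into code. This minimal Python sketch represents $n$D vectors as plain lists of floats (an assumption for illustration; the function names are hypothetical) and checks on one example in $\mathbb{R}^4$ that $\mathbf{v}$ minus its projection onto $\mathbf{w}$ is perpendicular to $\mathbf{w}$.

```python
import math

def dot(v, w):
    """Dot product: the sum of componentwise products."""
    if len(v) != len(w):
        raise ValueError("vectors must have the same number of components")
    return sum(a * b for a, b in zip(v, w))

def norm(v):
    """Norm |v| = sqrt(v . v)."""
    return math.sqrt(dot(v, v))

def angle(v, w):
    """Angle in radians from the cosine formula, clamped against rounding."""
    c = dot(v, w) / (norm(v) * norm(w))
    return math.acos(max(-1.0, min(1.0, c)))

def proj(v, w):
    """Projection of v onto a nonzero vector w: ((v . w) / (w . w)) w."""
    scale = dot(v, w) / dot(w, w)
    return [scale * wi for wi in w]

def orth(v, w):
    """The part of v perpendicular to w: v - proj_w(v)."""
    return [vi - pi for vi, pi in zip(v, proj(v, w))]

v = [1.0, 2.0, 3.0, 4.0]
w = [1.0, 0.0, 1.0, 0.0]
print(proj(v, w))          # [2.0, 0.0, 2.0, 0.0]
print(orth(v, w))          # [-1.0, 2.0, 1.0, 4.0]
print(dot(orth(v, w), w))  # 0.0
```

Trying other `v` with the same `w` shows the projections all stay parallel to `w`, while the perpendicular parts point in different directions inside the hyperplane.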

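The Cauchy-Schwarz and triangle inequalities become more important in $n$D:

$$|\mathbf{v}\cdot\mathbf{w}| \le |\mathbf{v}|\,|\mathbf{w}|, \qquad |\mathbf{v}+\mathbf{w}| \le |\mathbf{v}| + |\mathbf{w}|.$$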
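Both inequalities can be spot-checked numerically on random vectors. This is a minimal sketch under stated assumptions (hypothetical function name, vectors drawn uniformly from a box, a small tolerance for rounding); passing the check is evidence, not a proof.

```python
import math
import random

def dot(v, w):
    return sum(a * b for a, b in zip(v, w))

def norm(v):
    return math.sqrt(dot(v, v))

def check_inequalities(n, trials=1000, seed=0):
    """Spot-check Cauchy-Schwarz and the triangle inequality on random
    vectors in R^n. Returns False at the first violation found."""
    rng = random.Random(seed)
    tol = 1e-9  # slack for floating-point rounding
    for _ in range(trials):
        v = [rng.uniform(-10, 10) for _ in range(n)]
        w = [rng.uniform(-10, 10) for _ in range(n)]
        # Cauchy-Schwarz: |v . w| <= |v| |w|
        if abs(dot(v, w)) > norm(v) * norm(w) + tol:
            return False
        # Triangle inequality: |v + w| <= |v| + |w|
        s = [a + b for a, b in zip(v, w)]
        if norm(s) > norm(v) + norm(w) + tol:
            return False
    return True

print(check_inequalities(5))  # True
```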

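The vector formula for a line through the point $\mathbf{p}$ in the direction of $\mathbf{v}$, namely $\mathbf{r}(t) = \mathbf{p} + t\mathbf{v}$, still works in $n$D. However, the formula for a plane:

$$\mathbf{n}\cdot(\mathbf{x} - \mathbf{p}) = 0$$

determines a hyperplane in $n$D, meaning an $(n-1)$D space inside of $\mathbb{R}^n$. We could write this space in scalar form as

$$a_1x_1 + a_2x_2 + \cdots + a_nx_n = d,$$

where:

$$\mathbf{n} = (a_1, a_2, \ldots, a_n), \qquad d = \mathbf{n}\cdot\mathbf{p}.$$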
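As a small illustration of passing from the normal-vector form to scalar form, the sketch below computes the constant $d = \mathbf{n}\cdot\mathbf{p}$ for a normal vector $\mathbf{n}$ and a point $\mathbf{p}$ on the hyperplane, then tests whether given points satisfy the scalar equation. The helper names and the integer example are hypothetical.

```python
def scalar_form(normal, point):
    """Return (coefficients, d) for the hyperplane through `point` with
    normal vector `normal`: a_1 x_1 + ... + a_n x_n = d, where d = n . p."""
    if len(normal) != len(point):
        raise ValueError("normal and point must have the same length")
    d = sum(a * p for a, p in zip(normal, point))
    return list(normal), d

def on_hyperplane(x, coeffs, d, tol=1e-12):
    """True if x satisfies a_1 x_1 + ... + a_n x_n = d (up to tol)."""
    return abs(sum(a * xi for a, xi in zip(coeffs, x)) - d) <= tol

coeffs, d = scalar_form([1, 2, 0, -1], [1, 1, 1, 1])
print(coeffs, d)                               # [1, 2, 0, -1] 2
print(on_hyperplane([2, 0, 5, 0], coeffs, d))  # True
print(on_hyperplane([0, 0, 0, 0], coeffs, d))  # False
```

Because $d \neq 0$ here, this hyperplane does not pass through the origin.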

Spans

Linear combinations of vectors in $n$D work just as in 2D and 3D:

$$\mathbf{w} = c_1\mathbf{v}_1 + c_2\mathbf{v}_2 + \cdots + c_k\mathbf{v}_k.$$

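A span is the collection of all vectors that can be obtained as linear combinations of certain given vectors. For example, the collection of all possible $\mathbf{w}$ that can be written as above, for any scalars $c_1, \ldots, c_k$, in terms of the vectors $\mathbf{v}_1, \ldots, \mathbf{v}_k$ given at the outset, is called the span of $\mathbf{v}_1, \ldots, \mathbf{v}_k$ and is written

$$\mathrm{Span}(\mathbf{v}_1, \ldots, \mathbf{v}_k).$$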

It is still an important fact that a span passes through the origin $\mathbf{0}$. This is because linear combinations do not include a constant term, so the point $\mathbf{0}$ can always be achieved by setting $c_i = 0$ for all $i$.
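A linear combination is computed componentwise. In this minimal sketch (hypothetical helper name, vectors as Python lists), setting every coefficient to zero returns the origin, which is exactly why every span contains $\mathbf{0}$.

```python
def linear_combination(coeffs, vectors):
    """Return c_1 v_1 + ... + c_k v_k for equal-length vectors v_i."""
    if len(coeffs) != len(vectors) or not vectors:
        raise ValueError("need one coefficient per vector, and at least one vector")
    n = len(vectors[0])
    result = [0] * n
    for c, v in zip(coeffs, vectors):
        if len(v) != n:
            raise ValueError("all vectors must have the same length")
        for i in range(n):
            result[i] += c * v[i]
    return result

vs = [[1, 0, 1, 2], [0, 1, 1, -1]]
print(linear_combination([2, -1], vs))  # [2, -1, 1, 5]
print(linear_combination([0, 0], vs))   # [0, 0, 0, 0], the origin
```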

Example

Computing a span by hand

Consider the vectors and . Problem: Show that the set of vectors perpendicular to both and is a span.

Solution: Let be an arbitrary vector such that . By writing these dot products using components, we find a system of two equations:

Solve for and in terms of the others:

Now, we can let , , and take any value, and using these equations we can specify and to guarantee that the system of equations is valid, implying that . Conversely, given values of , , and , these equations fully determine the only possible values of and . Therefore, the set of possible is given by varying , , and in the vector:

Observe that this vector can be written as the linear combination , with

So indeed the set of vectors perpendicular to and is the span .
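The same procedure (solve for some variables, let the rest be free) can be automated by row reduction. This sketch row-reduces the homogeneous system with exact fractions and builds one spanning vector per free variable, by setting that free variable to 1 and the others to 0. The vectors `a` and `b` are illustrative choices in $\mathbb{R}^5$, not the ones in the example above, and `null_space_basis` is a hypothetical name.

```python
from fractions import Fraction

def null_space_basis(rows):
    """Return vectors spanning {x : r . x = 0 for every r in rows}.

    `rows` must be a nonempty list of equal-length vectors. Row-reduces with
    exact fractions to reduced row echelon form, then builds one spanning
    vector per free variable: that free variable is set to 1, the other free
    variables to 0, and the pivot variables are solved for.
    """
    m = [[Fraction(a) for a in r] for r in rows]
    n = len(m[0])
    pivots = []  # pivot column of each nonzero row, top to bottom
    r = 0
    for c in range(n):
        if r == len(m):
            break
        p = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if p is None:
            continue  # no pivot in this column: x_c is a free variable
        m[r], m[p] = m[p], m[r]
        lead = m[r][c]
        m[r] = [a / lead for a in m[r]]
        for i in range(len(m)):
            if i != r and m[i][c] != 0:
                f = m[i][c]
                m[i] = [a - f * b for a, b in zip(m[i], m[r])]
        pivots.append(c)
        r += 1
    free = [c for c in range(n) if c not in pivots]
    basis = []
    for fc in free:
        x = [Fraction(0)] * n
        x[fc] = Fraction(1)
        for row, pc in enumerate(pivots):
            x[pc] = -m[row][fc]
        basis.append(x)
    return basis

a = [1, 1, 1, 1, 1]
b = [1, -1, 0, 2, 0]
for x in null_space_basis([a, b]):
    print([str(t) for t in x])
```

Here there are two pivot variables and three free ones, so the perpendicular set is the span of three vectors, each of which has zero dot product with both `a` and `b`.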

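Convex combinations

A convex combination of vectors is a linear combination with certain constraints on the coefficients:

$$c_1\mathbf{v}_1 + \cdots + c_k\mathbf{v}_k, \qquad \text{where } c_i \ge 0 \text{ for all } i \text{ and } c_1 + \cdots + c_k = 1.$$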
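The following sketch assumes the usual constraints for convex combinations (nonnegative coefficients summing to 1) and rejects coefficient lists that violate them; the function name and example points are hypothetical. The midpoint of two points is the simplest case.

```python
def convex_combination(coeffs, vectors, tol=1e-12):
    """Return c_1 v_1 + ... + c_k v_k after checking the convexity
    constraints: every c_i >= 0 and c_1 + ... + c_k = 1 (up to tol)."""
    if len(coeffs) != len(vectors) or not vectors:
        raise ValueError("need one coefficient per vector, and at least one vector")
    if any(c < -tol for c in coeffs):
        raise ValueError("coefficients of a convex combination must be nonnegative")
    if abs(sum(coeffs) - 1) > tol:
        raise ValueError("coefficients of a convex combination must sum to 1")
    n = len(vectors[0])
    return [sum(c * v[i] for c, v in zip(coeffs, vectors)) for i in range(n)]

# The midpoint of two points: c_1 = c_2 = 1/2.
print(convex_combination([0.5, 0.5], [[0, 0, 0], [2, 4, 6]]))  # [1.0, 2.0, 3.0]
```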

Subspaces

A subspace is any collection of vectors that satisfies the rules of a vector space, meaning the operations and properties. Since the properties automatically hold for vectors from the original space, the key point is that a subspace is a collection of vectors including the origin, all of whose linear combinations are still in the subspace.

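Symbolically: if $U$ is any subset of vectors containing $\mathbf{0}$, and if $c\mathbf{u} + d\mathbf{v} \in U$ automatically whenever $\mathbf{u}, \mathbf{v} \in U$ and $c, d$ are scalars, then $U$ is a subspace.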

Question 05-01

Subspaces

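Suppose a set $U$ satisfies the symbolic hypothesis above:

$$\mathbf{0} \in U, \qquad \mathbf{u}, \mathbf{v} \in U \implies c\mathbf{u} + d\mathbf{v} \in U \text{ for all scalars } c, d.$$

Show that any linear combination of vectors from $U$ must lie in $U$.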

Exercise 05-01

Subspaces given by perpendicularity

Let $S^\perp$ be defined as the set of vectors that are perpendicular to every vector in a given set $S$. Show that $S^\perp$ is a subspace.

To say that $S^\perp$ is a subspace is to say that $S^\perp$ is a vector space in its own right, even though vectors in $S^\perp$ may also live in a bigger space.

Example

Simple subspaces of $\mathbb{R}^n$

Let $U$ be the collection of all possible vectors $(x_1, \ldots, x_{n-1}, 0)$, meaning that the final component is always zero, and the other components can be anything.

This set is a subspace because it contains $\mathbf{0}$, and any linear combination of such vectors will still have a zero in the final component, so it lies in $U$.
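The subspace test can be probed numerically. This sketch (hypothetical function names) samples only finitely many combinations, so a `True` result is evidence rather than proof, while a `False` result exhibits a genuine failure. It checks the example set of vectors with final component zero and, for contrast, the set with final component one, which fails because it does not contain $\mathbf{0}$.

```python
import random

def spot_check_subspace(contains, sample, n, trials=500, seed=1):
    """Numerical evidence (not a proof) that a set U in R^n is a subspace.

    `contains(v)` tests membership in U; `sample(rng)` returns a random
    member of U. Checks that 0 is in U and that random combinations
    c u + d v of sampled members stay in U.
    """
    rng = random.Random(seed)
    if not contains([0] * n):
        return False
    for _ in range(trials):
        u, v = sample(rng), sample(rng)
        c, d = rng.randint(-9, 9), rng.randint(-9, 9)
        if not contains([c * a + d * b for a, b in zip(u, v)]):
            return False
    return True

N = 4

def last_is_zero(v):
    return v[-1] == 0

def sample_last_zero(rng):
    return [rng.randint(-9, 9) for _ in range(N - 1)] + [0]

def last_is_one(v):
    return v[-1] == 1

def sample_last_one(rng):
    return [rng.randint(-9, 9) for _ in range(N - 1)] + [1]

print(spot_check_subspace(last_is_zero, sample_last_zero, N))  # True
print(spot_check_subspace(last_is_one, sample_last_one, N))    # False: 0 is missing
```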

Question 05-02

Iterating from to

How many distinct subspaces of $\mathbb{R}^n$ can you describe using the general idea of the previous example?

Spans and subspaces

A span is always a subspace: it contains , and any linear combination of some vectors in a span can be written as a linear combination of the original vectors defining the span (by expanding each vector in terms of the original vectors, and then collecting like terms).
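The "expand and collect like terms" step is arithmetic on coefficients. In this sketch (hypothetical names, small integer example), each $\mathbf{u}_j$ in the span is recorded by its coefficients on the original vectors, and a combination of the $\mathbf{u}_j$ is rewritten directly as a combination of the original vectors; both routes produce the same vector.

```python
def lin_comb(coeffs, vectors):
    """c_1 v_1 + ... + c_k v_k, computed componentwise."""
    n = len(vectors[0])
    return [sum(c * v[i] for c, v in zip(coeffs, vectors)) for i in range(n)]

def collect_like_terms(A, b):
    """If u_j = sum_i A[j][i] v_i and w = sum_j b[j] u_j, return the
    coefficients of w on the original v_i:
    w = sum_i (sum_j b[j] A[j][i]) v_i."""
    k = len(A[0])
    return [sum(b[j] * A[j][i] for j in range(len(A))) for i in range(k)]

v = [[1, 0, 0], [0, 1, 1]]   # original spanning vectors v_1, v_2
A = [[2, 1], [1, -3]]        # u_1 = 2 v_1 + v_2,  u_2 = v_1 - 3 v_2
u = [lin_comb(row, v) for row in A]
b = [4, -1]                  # w = 4 u_1 - u_2

w_direct = lin_comb(b, u)
w_collected = lin_comb(collect_like_terms(A, b), v)
print(collect_like_terms(A, b))  # [7, 7]
print(w_direct, w_collected)     # [7, 7, 7] [7, 7, 7]
```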

The converse is also true: any subspace is the span of some vectors. This is not so easy to prove! Here is the proof:

Why every subspace is a span

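We consider $\mathbb{R}^{n-1} \subseteq \mathbb{R}^n$ by using the first $n-1$ components and setting the last component to zero. We prove that if we assume every subspace of $\mathbb{R}^{n-1}$ is a span, then every subspace of $\mathbb{R}^n$ must also be a span. (The result will follow because it is clearly true for $\mathbb{R}^1$, and we can use this fact to build up to $\mathbb{R}^n$ one dimension at a time.)

So we assume that every subspace of $\mathbb{R}^{n-1}$ is a span. Now suppose $U \subseteq \mathbb{R}^n$ is any subspace. Consider the last components of vectors $\mathbf{u} \in U$. If all of these last components are zero, then actually $U \subseteq \mathbb{R}^{n-1}$, and by the assumption, it is a span.

Suppose, on the other hand, that at least one vector $\mathbf{u} \in U$ has a last component $u_n \neq 0$. Now we create the subspace $U'$ defined as

$$U' = \{\mathbf{v} \in U : v_n = 0\}.$$

The collection $U'$ is a subspace of $\mathbb{R}^{n-1}$ (it contains $\mathbf{0}$, and linear combinations of its vectors stay in $U$ and keep a zero last component), so it is a span by the assumption, and we can find a generating set of vectors:

$$U' = \mathrm{Span}(\mathbf{w}_1, \ldots, \mathbf{w}_k).$$

Now we propose the following key fact:

$$U = \mathrm{Span}(\mathbf{w}_1, \ldots, \mathbf{w}_k, \mathbf{u}).$$

To prove this, first note that the proposed span is contained in $U$, because $U$ is a subspace containing all of these vectors. Conversely, let $\mathbf{v} \in U$ be any vector. If $v_n = 0$, then $\mathbf{v} \in U'$, so it is in the proposed span above. If $v_n \neq 0$, then consider the vector $\mathbf{v}' = \mathbf{v} - \frac{v_n}{u_n}\mathbf{u}$. This vector lies in $U$ and has zero in the last component. So $\mathbf{v}' \in U'$, which can be expanded as a linear combination of $\mathbf{w}_1, \ldots, \mathbf{w}_k$. By vector algebra, $\mathbf{v} = \mathbf{v}' + \frac{v_n}{u_n}\mathbf{u}$, and we can substitute the expansion of $\mathbf{v}'$ to see that $\mathbf{v}$ is in the proposed span.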
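The key algebraic move in the proof, subtracting a multiple of $\mathbf{u}$ to clear the last component, can be checked on concrete numbers. This sketch uses exact fractions; the function name and the sample vectors are hypothetical.

```python
from fractions import Fraction

def split_off_u(v, u):
    """Return (v_prime, t) with t = v_n / u_n and v_prime = v - t u.

    v_prime has last component zero, and v = v_prime + t u.
    Requires u_n != 0, as for the vector u chosen in the proof.
    """
    if u[-1] == 0:
        raise ValueError("u must have a nonzero last component")
    t = Fraction(v[-1]) / Fraction(u[-1])
    v_prime = [Fraction(vi) - t * ui for vi, ui in zip(v, u)]
    return v_prime, t

u = [1, 2, 4]
v = [3, 0, 6]
v_prime, t = split_off_u(v, u)
print(t, [str(x) for x in v_prime])                   # 3/2 ['3/2', '-3', '0']
print([x + t * ui for x, ui in zip(v_prime, u)] == v)  # True
```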

The difference between the concept of span and the concept of subspace is a matter of connotation. When working with a ‘span’, we have in mind a collection of vectors that generates the span using linear combinations. When working with a ‘subspace’, we have in mind the abstract rules of vector spaces.

Incidentally, the above proof shows that any subspace of $\mathbb{R}^n$ can be written as the span of $n$ or fewer vectors.

Dimension

The dimension of a subspace $U$, written $\dim U$, is the smallest possible number of vectors needed to span $U$.
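For explicitly given vectors, the dimension of their span can be computed by row reduction. This sketch assumes a fact not proved in this packet (it belongs to the theory of independence): row operations do not change the span of the rows, and the number of nonzero rows left in echelon form equals the dimension of the span. The function name is hypothetical.

```python
from fractions import Fraction

def rank(vectors):
    """Number of nonzero rows after row-reducing the vectors (as rows)
    to echelon form, using exact fractions."""
    m = [[Fraction(a) for a in v] for v in vectors]
    if not m:
        return 0
    r = 0
    for c in range(len(m[0])):
        # find a row at or below r with a nonzero entry in column c
        p = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if p is None:
            continue
        m[r], m[p] = m[p], m[r]
        # clear column c below the pivot
        for i in range(r + 1, len(m)):
            f = m[i][c] / m[r][c]
            m[i] = [a - f * b for a, b in zip(m[i], m[r])]
        r += 1
        if r == len(m):
            break
    return r

print(rank([[1, 0, 0], [0, 1, 0], [0, 0, 1]]))  # 3
print(rank([[1, 2, 3], [2, 4, 6], [0, 1, 1]]))  # 2
```

In the second example the second vector is twice the first, so only two vectors are needed to span.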

This definition is very intuitive: a space has $d$ dimensions if $d$ different numbers are needed to locate every item in the space. These numbers are the coefficients of the spanning vectors in a minimal spanning set for the space.

The definition can also be hard to work with in a rigorous way. How could we prove that a given space could not be spanned by an even smaller number of vectors?

For example, of course $\mathbb{R}^n$ should be $n$-dimensional. That is why we have been saying “$n$D.” Of course it is spanned by the $n$ standard basis vectors $\mathbf{e}_1, \ldots, \mathbf{e}_n$. But how do we know it cannot be spanned by a smaller number?

In the next section the concept of independence will be developed to handle this question more generally. However, it is worthwhile practice with the general theory of spans to demonstrate this fact by learning about Steinitz Exchange:

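Showing $\mathbb{R}^n$ is $n$-dimensional: Steinitz Exchange Process

Suppose some set $\{\mathbf{v}_1, \ldots, \mathbf{v}_k\}$ with only $k$ vectors could span $\mathbb{R}^n$ with $k < n$, so:

$$\mathbb{R}^n = \mathrm{Span}(\mathbf{v}_1, \ldots, \mathbf{v}_k).$$

This means that $\mathbf{e}_1 \in \mathrm{Span}(\mathbf{v}_1, \ldots, \mathbf{v}_k)$. Then it turns out we can “exchange” $\mathbf{e}_1$ for one of the $\mathbf{v}_i$:

$$\mathbb{R}^n = \mathrm{Span}(\mathbf{e}_1, \mathcal{V}_{k-1}),$$

where the notation $\mathcal{V}_{k-1}$ means some subset of $\{\mathbf{v}_1, \ldots, \mathbf{v}_k\}$ with only $k-1$ elements, but we don’t care which is which. The reason we can do this is important: since $\mathbf{e}_1 \in \mathrm{Span}(\mathbf{v}_1, \ldots, \mathbf{v}_k)$, we can write a linear combination

$$\mathbf{e}_1 = c_1\mathbf{v}_1 + \cdots + c_k\mathbf{v}_k$$

with at least one $c_i \neq 0$ (because $\mathbf{e}_1 \neq \mathbf{0}$). The equation can be solved for that $\mathbf{v}_i$ using the other vectors in the equation, and therefore every vector in $\mathrm{Span}(\mathbf{v}_1, \ldots, \mathbf{v}_k) = \mathbb{R}^n$ can be expressed as a linear combination of those other vectors in the equation. But that is the meaning of “$\mathbb{R}^n = \mathrm{Span}(\mathbf{e}_1, \mathcal{V}_{k-1})$.”

Now iterate this process: we can exchange $\mathbf{e}_2$ for another vector out of $\mathcal{V}_{k-1}$:

$$\mathbb{R}^n = \mathrm{Span}(\mathbf{e}_1, \mathbf{e}_2, \mathcal{V}_{k-2}).$$

The reason we can do this is similar, but with a slight twist. As before, write a linear combination:

$$\mathbf{e}_2 = a_1\mathbf{e}_1 + \sum_{\mathbf{v} \in \mathcal{V}_{k-1}} c_{\mathbf{v}}\mathbf{v}.$$

This time, however, we know that at least one of the coefficients $c_{\mathbf{v}}$ is nonzero, say $c_{\mathbf{v}^*} \neq 0$, because there is no way to generate $\mathbf{e}_2$ using combinations of $\mathbf{e}_1$ alone. So we solve for $\mathbf{v}^*$ using the other vectors in the equation. This means we can eliminate $\mathbf{v}^*$ from the spanning set, provided we add in $\mathbf{e}_2$.

Now iterate the process $k$ times, at each successive stage $j$ replacing some remaining $\mathbf{v}$ with the new vector $\mathbf{e}_j$, observing that the linear combination for $\mathbf{e}_j$ cannot have all of its nonzero coefficients on the earlier vectors $\mathbf{e}_1, \ldots, \mathbf{e}_{j-1}$.

After $k$ iterations, we have $\mathbb{R}^n = \mathrm{Span}(\mathbf{e}_1, \ldots, \mathbf{e}_k)$. This is impossible! Since $k < n$, there is no way to get nonzero entries in components higher than $k$; for example, $\mathbf{e}_n$ is not in this span.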

Problems due Tuesday 20 Feb 2024 by 11:59pm

Problem 05-01

Spans of column vectors

Matrices are matrices, and . Matrix has ones everywhere on and above the main diagonal, down to the row, and zeros elsewhere, including everything after the row. Matrix has ones on the main diagonal only, down to the row, and zeros everywhere else. Show that the span of the column vectors of is the same subspace as the span of the column vectors of . What subspace is it?

(Hint: first try to verify the assertion for small values of and , for example when and , and then and or . Then see if you can generalize your method to all .)
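Small cases can also be checked by computer before looking for a general argument. This sketch assumes one reading of the problem: both matrices have `rows` rows and `cols` columns, and "down to the $k$th row" means rows $1$ through $k$; the parameter names and all helper functions are hypothetical. It compares column spans using the fact that two sets of vectors span the same subspace exactly when each set has the same rank as the two sets combined (rank via row reduction, a tool not developed in this packet). A check of finitely many cases is not a proof.

```python
from fractions import Fraction

def rank(vectors):
    """Rank of a list of vectors via row reduction with exact fractions."""
    m = [[Fraction(a) for a in v] for v in vectors]
    if not m:
        return 0
    r = 0
    for c in range(len(m[0])):
        p = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if p is None:
            continue
        m[r], m[p] = m[p], m[r]
        for i in range(r + 1, len(m)):
            f = m[i][c] / m[r][c]
            m[i] = [a - f * b for a, b in zip(m[i], m[r])]
        r += 1
        if r == len(m):
            break
    return r

def build_A(rows, cols, k):
    """Hypothetical reading: ones on and above the diagonal in rows 1..k."""
    return [[1 if (i <= j and i < k) else 0 for j in range(cols)] for i in range(rows)]

def build_B(rows, cols, k):
    """Hypothetical reading: ones on the diagonal in rows 1..k only."""
    return [[1 if (i == j and i < k) else 0 for j in range(cols)] for i in range(rows)]

def columns(M):
    return [list(col) for col in zip(*M)]

def same_column_span(M, N):
    """Span(cols of M) == Span(cols of N) iff both ranks equal the rank of
    all the columns taken together."""
    cm, cn = columns(M), columns(N)
    return rank(cm) == rank(cn) == rank(cm + cn)

for rows, cols, k in [(2, 2, 1), (3, 3, 1), (3, 3, 2), (4, 3, 2)]:
    print(rows, cols, k, same_column_span(build_A(rows, cols, k), build_B(rows, cols, k)))
```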

Problem 05-02

Computing dimensions by hand

- Show that the span has dimension as a subspace by using the “exchange” technique.

- Show that the span has dimension as a subspace by using the “exchange” technique.

- Show that the span has dimension as a subspace.

Problem 05-03

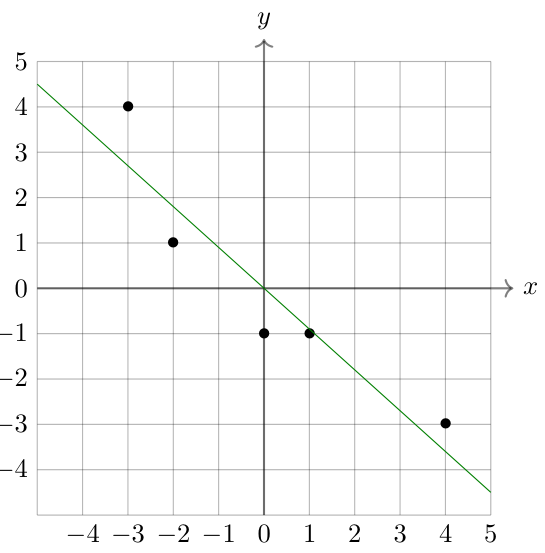

Suppose we are given a collection of data points $(x_1, y_1), \ldots, (x_n, y_n)$.

Later in the course, we will learn how to compute the linear regression of this data, namely the line of best fit. In this problem, you will learn about the correlation coefficient of the data: a measure of how linear the data is. There is no point in computing a linear regression if the data is not very linear!


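First, let $\mathbf{x} = (x_1, \ldots, x_n)$ and $\mathbf{y} = (y_1, \ldots, y_n)$. Then let $\bar{x}$ be the average of the $x_i$, and $\bar{y}$ be the average of the $y_i$. Therefore $\mathbf{x} - \bar{x}$ and $\mathbf{y} - \bar{y}$ have zero average. (By subtracting a scalar from a vector, we really mean to subtract the scalar from each component.) Then define the correlation coefficient:

Correlation coefficient

$$\rho = \frac{(\mathbf{x} - \bar{x})\cdot(\mathbf{y} - \bar{y})}{|\mathbf{x} - \bar{x}|\,|\mathbf{y} - \bar{y}|}.$$

Problem: Compute the correlation coefficient of the data:

The correlation coefficient is frequently written ‘$r$’. The formula describes $\rho$ as a dot product of unit vectors, so $-1 \le \rho \le 1$. When $|\rho| \approx 1$, the data are very linear. When $\rho \approx 1$, the data lie close to a line with positive slope, meaning that if you increase $x$ then you expect $y$ to increase as well. When $\rho \approx -1$, the data lie close to a line with negative slope, so if you increase $x$ then you expect $y$ to decrease.

In a future Packet we will see why $\rho$ measures linearity. For now, consider the following. Suppose the data are linear, so $y_i = ax_i + b$ for some $a$ and $b$. Then as vectors, we have $\mathbf{y} = a\mathbf{x} + b$. If we subtract the average of both sides (using $\bar{y} = a\bar{x} + b$), we have

$$\mathbf{y} - \bar{y} = a(\mathbf{x} - \bar{x}).$$

Therefore $\mathbf{y} - \bar{y}$ is a scalar multiple of $\mathbf{x} - \bar{x}$, and the dot product of their unit vectors tells us whether they align ($a > 0$, giving $\rho = 1$) or anti-align ($a < 0$, giving $\rho = -1$), assuming the $x_i$ are not all equal.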
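The definition and the linear-data argument can be checked numerically. In this sketch the data values are made up for illustration (they are not the data from the problem), and the function name is hypothetical; for exactly linear data the result should be $1$ or $-1$ up to floating-point rounding, matching the sign of the slope.

```python
import math

def correlation(xs, ys):
    """Dot product of the unit vectors along x - x_bar and y - y_bar."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two lists of equal length, with at least 2 points")
    x_bar = sum(xs) / len(xs)
    y_bar = sum(ys) / len(ys)
    xc = [x - x_bar for x in xs]  # centered: components average to zero
    yc = [y - y_bar for y in ys]
    nx = math.sqrt(sum(a * a for a in xc))
    ny = math.sqrt(sum(b * b for b in yc))
    if nx == 0 or ny == 0:
        raise ValueError("correlation is undefined when all x or all y values are equal")
    return sum(a * b for a, b in zip(xc, yc)) / (nx * ny)

xs = [0.0, 1.0, 2.0, 3.0]
print(correlation(xs, [2 * x + 1 for x in xs]))   # close to 1.0 (positive slope)
print(correlation(xs, [-3 * x + 5 for x in xs]))  # close to -1.0 (negative slope)
```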

This problem (and its sequel) illustrates the use of $n$D vectors to study data that is presented as 2D vectors.