Theory 1

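For random variables $X$ and $Y$, write $\mu_X = E[X]$ and $\mu_Y = E[Y]$.

Observe that the random variables $X - \mu_X$ and $Y - \mu_Y$ are “centered at zero,” meaning that $E[X - \mu_X] = 0 = E[Y - \mu_Y]$.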

Covariance

Suppose $X$ and $Y$ are any two random variables on a probability model. The covariance of $X$ and $Y$ measures the typical synchronous deviation of $X$ and $Y$ from their respective means.

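Then the defining formula for the covariance of $X$ and $Y$ is:

$$\operatorname{Cov}[X,Y] = E\big[(X - \mu_X)(Y - \mu_Y)\big]$$

There is also a shorter formula:

$$\operatorname{Cov}[X,Y] = E[XY] - \mu_X\mu_Y$$

To derive the shorter formula, first expand the product $(X - \mu_X)(Y - \mu_Y)$ and then apply linearity of expectation.

Notice that covariance is always symmetric:

$$\operatorname{Cov}[X,Y] = \operatorname{Cov}[Y,X]$$

The self covariance equals the variance:

$$\operatorname{Cov}[X,X] = \operatorname{Var}[X]$$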

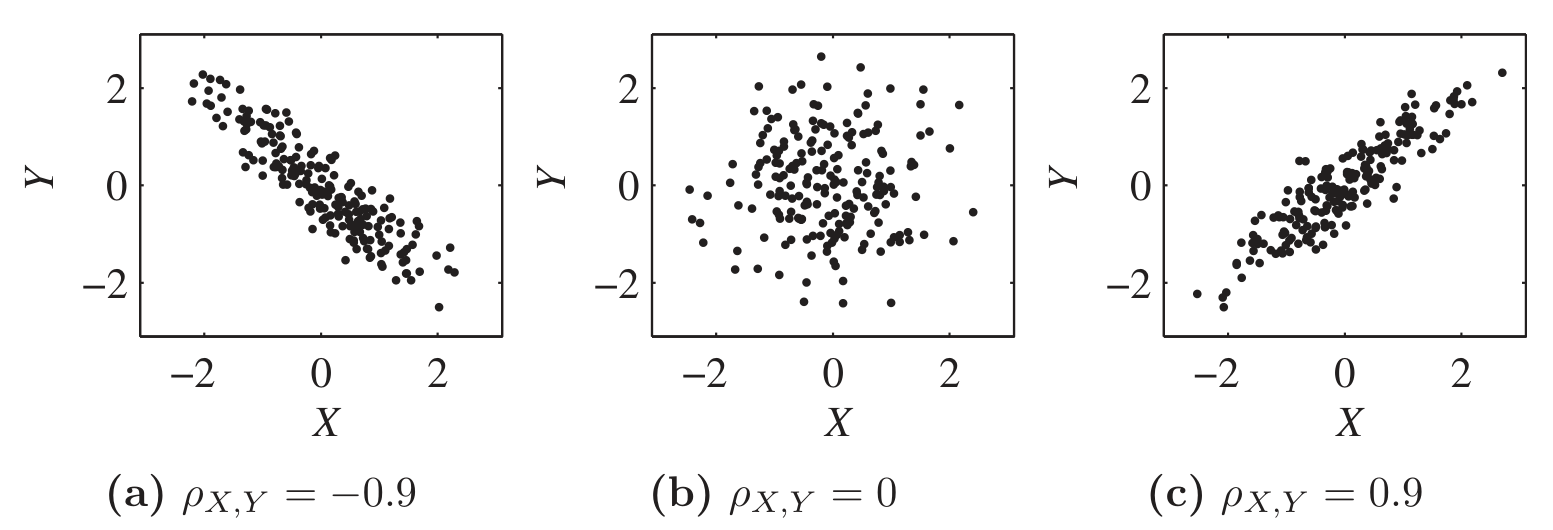
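As a quick numerical check of the defining and shorter covariance formulas, here is a minimal Python sketch. The joint PMF values and the helper name `expect` are illustrative assumptions, not part of these notes. Exact fractions are used so the two formulas can be compared without rounding.

```python
from fractions import Fraction as F

# Hypothetical joint PMF of (X, Y): keys are (x, y) outcomes, values are probabilities.
pmf = {
    (0, 0): F(3, 10),
    (0, 1): F(1, 10),
    (1, 0): F(2, 10),
    (1, 1): F(4, 10),
}

def expect(pmf, g):
    """E[g(X, Y)] for a finite joint PMF."""
    return sum(p * g(x, y) for (x, y), p in pmf.items())

mu_x = expect(pmf, lambda x, y: x)
mu_y = expect(pmf, lambda x, y: y)

# Defining formula: E[(X - mu_X)(Y - mu_Y)]
cov_def = expect(pmf, lambda x, y: (x - mu_x) * (y - mu_y))

# Shorter formula: E[XY] - mu_X * mu_Y
cov_short = expect(pmf, lambda x, y: x * y) - mu_x * mu_y

# Self covariance Cov[X, X] versus Var[X]
cov_xx = expect(pmf, lambda x, y: x * x) - mu_x * mu_x
var_x = expect(pmf, lambda x, y: (x - mu_x) ** 2)

print(cov_def, cov_short, cov_xx, var_x)
```

For this particular PMF both formulas give $1/10$, and the self covariance matches the variance $6/25$.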

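The sign of $\operatorname{Cov}[X,Y]$ reveals the correlation type between $X$ and $Y$:

| Correlation | Sign |
|---|---|
| Positively correlated | $\operatorname{Cov}[X,Y] > 0$ |
| Negatively correlated | $\operatorname{Cov}[X,Y] < 0$ |
| Uncorrelated | $\operatorname{Cov}[X,Y] = 0$ |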

Correlation coefficient

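Suppose $X$ and $Y$ are any two random variables on a probability model, with standard deviations $\sigma_X > 0$ and $\sigma_Y > 0$.

Their correlation coefficient is a rescaled version of covariance that measures the synchronicity of deviations:

$$\rho[X,Y] = \frac{\operatorname{Cov}[X,Y]}{\sigma_X\,\sigma_Y}$$

The rescaling ensures:

$$-1 \le \rho[X,Y] \le 1$$

Covariance depends on the separate variances of $X$ and $Y$ as well as their relationship.

The correlation coefficient, because we have divided out $\sigma_X\sigma_Y$, depends only on their relationship.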
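To illustrate the difference between covariance and correlation, the following sketch rescales one variable by 10 and compares both quantities. The PMF and the helper names `expect`, `cov`, and `corr` are assumptions made for the example.

```python
import math

# Hypothetical joint PMF of (X, Y).
pmf = {(0, 0): 0.3, (0, 1): 0.1, (1, 0): 0.2, (1, 1): 0.4}

def expect(pmf, g):
    """E[g(X, Y)] for a finite joint PMF."""
    return sum(p * g(x, y) for (x, y), p in pmf.items())

def cov(pmf, f, g):
    """Cov[f, g] via the shorter formula E[fg] - E[f]E[g]."""
    return expect(pmf, lambda x, y: f(x, y) * g(x, y)) - expect(pmf, f) * expect(pmf, g)

def corr(pmf, f, g):
    """Correlation coefficient: covariance divided by the two standard deviations."""
    return cov(pmf, f, g) / math.sqrt(cov(pmf, f, f) * cov(pmf, g, g))

X = lambda x, y: x
Y = lambda x, y: y
X10 = lambda x, y: 10 * x  # same variable measured in units ten times smaller

cov_xy, cov_x10y = cov(pmf, X, Y), cov(pmf, X10, Y)
rho_xy, rho_x10y = corr(pmf, X, Y), corr(pmf, X10, Y)

print(cov_xy, cov_x10y)   # covariance grows by the factor 10
print(rho_xy, rho_x10y)   # correlation is unchanged
```

The covariance changes with the units of $X$, while the correlation coefficient stays the same and lies between $-1$ and $1$.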

Theory 2

Covariance bilinearity

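Given any three random variables $X$, $Y$, and $Z$, we have:

$$\operatorname{Cov}[X + Y,\, Z] = \operatorname{Cov}[X,Z] + \operatorname{Cov}[Y,Z]$$

$$\operatorname{Cov}[Z,\, X + Y] = \operatorname{Cov}[Z,X] + \operatorname{Cov}[Z,Y]$$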

Covariance and correlation: shift and scale

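Covariance scales with each input, and ignores shifts. For constants $a$ and $b$:

$$\operatorname{Cov}[aX + b,\, Y] = a\operatorname{Cov}[X,Y] = \operatorname{Cov}[X,\, aY + b]$$

By contrast, a shift or nonzero scale changes the correlation only by a sign:

$$\rho[aX + b,\, Y] = \operatorname{sign}(a)\,\rho[X,Y] \qquad (a \neq 0)$$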
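The bilinearity and shift-and-scale rules can be checked numerically. The sketch below assumes a toy sample space of four equally likely outcomes. The outcome values, the constants `a` and `b`, and the helper names are all illustrative choices.

```python
import math
from fractions import Fraction as F

# Hypothetical sample space: four equally likely outcomes, each giving (x, y, z).
outcomes = [(1, 2, 0), (2, 1, 3), (3, 5, 1), (4, 4, 4)]
p = F(1, len(outcomes))

def expect(g):
    """E[g(X, Y, Z)] under the uniform distribution on `outcomes`."""
    return sum(p * g(*w) for w in outcomes)

def cov(f, g):
    """Cov[f, g] = E[fg] - E[f]E[g]."""
    return expect(lambda *w: f(*w) * g(*w)) - expect(f) * expect(g)

def corr(f, g):
    """Correlation coefficient, computed in floating point."""
    return float(cov(f, g)) / math.sqrt(float(cov(f, f)) * float(cov(g, g)))

X = lambda x, y, z: x
Y = lambda x, y, z: y
Z = lambda x, y, z: z
a, b = -3, 7  # negative scale, arbitrary shift

# Bilinearity: Cov[X + Y, Z] = Cov[X, Z] + Cov[Y, Z]
bilinear_lhs = cov(lambda *w: X(*w) + Y(*w), Z)
bilinear_rhs = cov(X, Z) + cov(Y, Z)

# Shift and scale: Cov[aX + b, Y] = a Cov[X, Y]
scaled = cov(lambda *w: a * X(*w) + b, Y)

# Correlation only picks up the sign of a
rho_flipped = corr(lambda *w: a * X(*w) + b, Y)

print(bilinear_lhs, bilinear_rhs, scaled, rho_flipped)
```

Because `a` is negative here, the correlation of $aX + b$ with $Y$ is the negative of $\rho[X,Y]$.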

Extra - Proof of covariance bilinearity

Extra - Proof of covariance shift and scale rule

Independence implies zero covariance

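Suppose that $X$ and $Y$ are any two random variables on a probability model.

If $X$ and $Y$ are independent, then:

$$\operatorname{Cov}[X,Y] = 0$$

Proof:

We know both of these:

$$\operatorname{Cov}[X,Y] = E[XY] - \mu_X\mu_Y, \qquad E[XY] = E[X]\,E[Y] \ \text{ (by independence)}$$

But $E[X]\,E[Y] = \mu_X\mu_Y$, so those terms cancel and $\operatorname{Cov}[X,Y] = 0$.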
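A small numerical illustration of this fact: when the joint PMF is built as a product of marginals (which is what independence means for discrete variables), the covariance comes out exactly zero. The marginal PMFs below are made-up values for the example.

```python
from fractions import Fraction as F
from itertools import product

# Hypothetical marginal PMFs for X and Y.
px = {0: F(1, 4), 1: F(1, 2), 5: F(1, 4)}
py = {-1: F(1, 3), 2: F(2, 3)}

# Independence: the joint PMF factors as P(X = x, Y = y) = P(X = x) P(Y = y).
joint = {(x, y): px[x] * py[y] for x, y in product(px, py)}

def expect(pmf, g):
    """E[g(X, Y)] for a finite joint PMF."""
    return sum(p * g(x, y) for (x, y), p in pmf.items())

e_x = expect(joint, lambda x, y: x)
e_y = expect(joint, lambda x, y: y)
e_xy = expect(joint, lambda x, y: x * y)

cov_xy = e_xy - e_x * e_y
print(e_xy, e_x * e_y, cov_xy)
```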

Sum rule for variance

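Suppose that $X$ and $Y$ are any two random variables on a probability space.

Then:

$$\operatorname{Var}[X + Y] = \operatorname{Var}[X] + \operatorname{Var}[Y] + 2\operatorname{Cov}[X,Y]$$

When $X$ and $Y$ are independent:

$$\operatorname{Var}[X + Y] = \operatorname{Var}[X] + \operatorname{Var}[Y]$$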
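The sum rule for variance can be verified on a small dependent example. In this sketch the joint PMF is an assumed toy distribution, and the variance of $X + Y$ is computed directly and then compared against the sum rule.

```python
from fractions import Fraction as F

# Hypothetical joint PMF of (X, Y); X and Y are dependent here.
pmf = {
    (0, 0): F(3, 10),
    (0, 1): F(1, 10),
    (1, 0): F(2, 10),
    (1, 1): F(4, 10),
}

def expect(g):
    """E[g(X, Y)] for the joint PMF above."""
    return sum(p * g(x, y) for (x, y), p in pmf.items())

def var(g):
    """Var[g] = E[g^2] - E[g]^2."""
    return expect(lambda x, y: g(x, y) ** 2) - expect(g) ** 2

X = lambda x, y: x
Y = lambda x, y: y

cov_xy = expect(lambda x, y: x * y) - expect(X) * expect(Y)

var_sum_direct = var(lambda x, y: x + y)          # Var[X + Y] computed directly
var_sum_rule = var(X) + var(Y) + 2 * cov_xy       # right-hand side of the sum rule

print(var_sum_direct, var_sum_rule)
```

Dropping the $2\operatorname{Cov}[X,Y]$ term would give $49/100$ instead of $69/100$ here, so the covariance term matters whenever the variables are not uncorrelated.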

Extra - Proof: Sum rule for variance

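Extra - Proof that $-1 \le \rho[X,Y] \le 1$

(1) Create standardizations:

$$\tilde{X} = \frac{X - \mu_X}{\sigma_X}, \qquad \tilde{Y} = \frac{Y - \mu_Y}{\sigma_Y}$$

Now $\tilde{X}$ and $\tilde{Y}$ satisfy:

$$E[\tilde{X}] = 0 = E[\tilde{Y}], \qquad \operatorname{Var}[\tilde{X}] = 1 = \operatorname{Var}[\tilde{Y}]$$

Observe that $\operatorname{Var}[W] \ge 0$ for any random variable $W$. Variance can’t be negative.

(2) Apply the variance sum rule.

Apply to $\tilde{X}$ and $\tilde{Y}$:

$$0 \le \operatorname{Var}[\tilde{X} + \tilde{Y}] = \operatorname{Var}[\tilde{X}] + \operatorname{Var}[\tilde{Y}] + 2\operatorname{Cov}[\tilde{X},\tilde{Y}]$$

Simplify:

$$0 \le 1 + 1 + 2\operatorname{Cov}[\tilde{X},\tilde{Y}] \quad\Longrightarrow\quad \operatorname{Cov}[\tilde{X},\tilde{Y}] \ge -1$$

Notice the effect of standardization:

$$\operatorname{Cov}[\tilde{X},\tilde{Y}] = \frac{\operatorname{Cov}[X,Y]}{\sigma_X\,\sigma_Y} = \rho[X,Y]$$

Therefore $\rho[X,Y] \ge -1$.

(3) Modify and reapply variance sum rule.

Change to $\tilde{X} - \tilde{Y}$:

$$0 \le \operatorname{Var}[\tilde{X} - \tilde{Y}] = \operatorname{Var}[\tilde{X}] + \operatorname{Var}[\tilde{Y}] - 2\operatorname{Cov}[\tilde{X},\tilde{Y}]$$

Simplify:

$$0 \le 2 - 2\rho[X,Y] \quad\Longrightarrow\quad \rho[X,Y] \le 1$$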
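The steps of this proof can be traced numerically. The sketch below standardizes two variables from an assumed toy PMF, then checks that the variances of the sum and difference of the standardized variables equal $2 + 2\rho$ and $2 - 2\rho$. The names `Xs` and `Ys` for the standardized variables are illustrative.

```python
import math

# Hypothetical joint PMF of (X, Y).
pmf = {(0, 0): 0.3, (0, 1): 0.1, (1, 0): 0.2, (1, 1): 0.4}

def expect(g):
    """E[g(X, Y)] for the joint PMF above."""
    return sum(p * g(x, y) for (x, y), p in pmf.items())

def var(g):
    """Var[g] = E[(g - E[g])^2]."""
    m = expect(g)
    return expect(lambda x, y: (g(x, y) - m) ** 2)

X = lambda x, y: x
Y = lambda x, y: y
mu_x, mu_y = expect(X), expect(Y)
sd_x, sd_y = math.sqrt(var(X)), math.sqrt(var(Y))

# Step (1): standardized variables with mean 0 and variance 1.
Xs = lambda x, y: (x - mu_x) / sd_x
Ys = lambda x, y: (y - mu_y) / sd_y

# Covariance of the standardizations, which equals rho[X, Y].
rho = expect(lambda x, y: Xs(x, y) * Ys(x, y)) - expect(Xs) * expect(Ys)

# Steps (2) and (3): variances of the sum and the difference.
var_sum = var(lambda x, y: Xs(x, y) + Ys(x, y))
var_diff = var(lambda x, y: Xs(x, y) - Ys(x, y))

print(rho, var_sum, var_diff)
```

Since both `var_sum` and `var_diff` are variances, neither can be negative, which is exactly what pins $\rho$ between $-1$ and $1$.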