Bernoulli process

01 Theory - Bernoulli, binomial, geometric, Pascal, uniform

In a Bernoulli process, an experiment with two possible outcomes (success or failure) is repeated independently, with the same success probability on every trial; for example, flipping a coin repeatedly. Several discrete random variables may be defined in the context of a Bernoulli process.

Notice that the sample space of a Bernoulli process is infinite: an outcome is any infinite sequence of trial outcomes, e.g. $HTTHHTH\cdots$ for coin flips.

Bernoulli variable

A random variable $X_i$ is a Bernoulli indicator, written $X_i \sim \text{Ber}(p)$, when $X_i$ indicates whether a success event, having probability $p$, took place in trial number $i$ of a Bernoulli process ($X_i = 1$ for success, $X_i = 0$ for failure).

Bernoulli PMF:

$$P_{X_i}(k) = \begin{cases} p & k = 1 \\ q & k = 0 \end{cases}$$

Here $q = 1 - p$.

An RV that takes only the values $0$ or $1$, for every outcome, is called an indicator variable.

Binomial variable

A random variable $X$ is binomial, written $X \sim \text{Bin}(n, p)$, when $X$ counts the number of successes in a Bernoulli process over a specified number $n$ of trials, where each trial succeeds with probability $p$.

Binomial PMF:

$$P_X(k) = \binom{n}{k} p^k q^{n-k}, \qquad k = 0, 1, \ldots, n$$

- For example, if $X \sim \text{Bin}(10, p)$, then $P_X(5)$ gives the probability that success happens exactly 5 times over 10 trials, with probability $p$ of success on each trial.

- In terms of the Bernoulli indicators, we have $X = X_1 + X_2 + \cdots + X_n$.

- If $S$ is the success event, then $p = P(S)$ is the success probability, and $q = 1 - p = P(S^c)$ is the failure probability.
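The binomial PMF and the identity $X = X_1 + \cdots + X_n$ can be cross-checked numerically. This Python sketch (the function names and the values $n = 10$, $p = 0.3$ are illustrative assumptions) computes $P_X(k)$ from the formula and again by enumerating every length-$n$ success/failure sequence, summing the probabilities of those with exactly $k$ successes.

```python
from itertools import product
from math import comb, isclose


def binomial_pmf(k: int, n: int, p: float) -> float:
    """P_X(k) = C(n, k) p^k q^(n-k) for X ~ Bin(n, p)."""
    if not 0 <= k <= n:
        return 0.0
    q = 1 - p
    return comb(n, k) * p**k * q ** (n - k)


def binomial_pmf_by_enumeration(k: int, n: int, p: float) -> float:
    """Sum the probabilities of all length-n outcomes (X_1, ..., X_n)
    whose indicators add up to exactly k successes."""
    total = 0.0
    for seq in product((0, 1), repeat=n):
        successes = sum(seq)  # X_1 + ... + X_n for this outcome
        if successes == k:
            total += p**successes * (1 - p) ** (n - successes)
    return total


n, p = 10, 0.3  # illustrative values
for k in range(n + 1):
    assert isclose(binomial_pmf(k, n, p), binomial_pmf_by_enumeration(k, n, p))
print(round(binomial_pmf(5, n, p), 4))  # 0.1029
```

Enumeration grows like $2^n$, so it is only a sanity check; the closed-form PMF is what you would use in practice.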

Geometric variable

A random variable $X$ is geometric, written $X \sim \text{Geo}(p)$, when $X$ counts the discrete wait time in a Bernoulli process until the first success takes place (the number of trials up to and including that success), given that success has probability $p$ in each trial.

Geometric PMF:

$$P_X(k) = q^{k-1} p$$

Here $k = 1, 2, 3, \ldots$ and $q = 1 - p$.

- For example, if $X \sim \text{Geo}(p)$, then $P_X(k)$ gives the probability of getting failure on the first $k - 1$ trials AND success on the $k$th trial.
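A quick numerical check of the geometric PMF, as a Python sketch (the function names and $p = 0.25$ are illustrative assumptions): the probabilities should sum to 1, and $P(X > k)$ should equal $q^k$, since $X > k$ means the first $k$ trials all failed.

```python
from math import isclose


def geometric_pmf(k: int, p: float) -> float:
    """P_X(k) = q^(k-1) p: failures on trials 1..k-1, first success on trial k."""
    if k < 1:
        return 0.0
    return (1 - p) ** (k - 1) * p


def geometric_tail(k: int, p: float) -> float:
    """P(X > k) = q^k: the first k trials are all failures."""
    return (1 - p) ** k


p = 0.25  # illustrative success probability
total = sum(geometric_pmf(k, p) for k in range(1, 200))  # truncated sum, should be ~1
tail_direct = 1 - sum(geometric_pmf(k, p) for k in range(1, 4))  # 1 - P(X <= 3)
assert isclose(total, 1.0)
assert isclose(tail_direct, geometric_tail(3, p))
print(total, tail_direct)
```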

Pascal variable

A random variable $X$ is Pascal, written $X \sim \text{Pas}(k, p)$, when $X$ counts the discrete wait time in a Bernoulli process until success happens $k$ times, given that success has probability $p$ in each trial.

Pascal PMF:

$$P_X(n) = \binom{n-1}{k-1} p^k q^{n-k}, \qquad n = k, k+1, k+2, \ldots$$

- For example, if $X \sim \text{Pas}(k, p)$, then $P_X(n)$ gives the probability of getting the $k$th success on (precisely) the $n$th trial.

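- Interpret the formula: there are $\binom{n-1}{k-1}$ ways to arrange $k - 1$ successes among the $n - 1$ ‘prior’ trials (the $n$th trial must be the $k$th success), times the probability $p^k q^{n-k}$ of exactly $k$ successes and $n - k$ failures in one specific sequence.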

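- The Pascal distribution is also called the negative binomial distribution, written e.g. $X \sim \text{NB}(k, p)$. Some sources instead use that name for the number of failures before the $k$th success.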
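The Pascal PMF can be sketched the same way. Under the illustrative assumption $p = 0.4$ (the function name is hypothetical), the code below checks that $\text{Pas}(1, p)$ reduces to $\text{Geo}(p)$ and that the $\text{Pas}(3, p)$ probabilities sum to (nearly) 1.

```python
from math import comb, isclose


def pascal_pmf(n: int, k: int, p: float) -> float:
    """P_X(n) = C(n-1, k-1) p^k q^(n-k) for X ~ Pas(k, p): k-th success on trial n."""
    if n < k:
        return 0.0
    q = 1 - p
    return comb(n - 1, k - 1) * p**k * q ** (n - k)


p = 0.4  # illustrative success probability
# With k = 1, the Pascal PMF should reduce to the geometric PMF q^(n-1) p.
for n in range(1, 10):
    assert isclose(pascal_pmf(n, 1, p), (1 - p) ** (n - 1) * p)
# For k = 3, summing over n = 3, 4, 5, ... (truncated) should give (nearly) 1.
print(sum(pascal_pmf(n, 3, p) for n in range(3, 400)))
```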

Uniform variable

A discrete random variable $X$ is uniform on a finite set $S$, written $X \sim \text{Unif}(S)$, when the probability $P_X(k)$ is a fixed constant for values $k$ in $S$ and zero for values outside $S$.

Discrete uniform PMF:

$$P_X(k) = \begin{cases} \dfrac{1}{|S|} & k \in S \\ 0 & k \notin S \end{cases}$$

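Continuous uniform PDF, for $X \sim \text{Unif}[a, b]$:

$$f_X(x) = \begin{cases} \dfrac{1}{b - a} & a \le x \le b \\ 0 & \text{otherwise} \end{cases}$$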
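Both uniform formulas translate directly into code. A minimal Python sketch (the function names are hypothetical), using exact fractions for the discrete case:

```python
from fractions import Fraction


def discrete_uniform_pmf(k, S) -> Fraction:
    """P_X(k) = 1/|S| for k in S, and 0 otherwise."""
    return Fraction(1, len(S)) if k in S else Fraction(0)


def continuous_uniform_pdf(x: float, a: float, b: float) -> float:
    """f_X(x) = 1/(b - a) on [a, b], and 0 outside."""
    return 1 / (b - a) if a <= x <= b else 0.0


die = {1, 2, 3, 4, 5, 6}  # a fair die is uniform on this set
print(discrete_uniform_pmf(3, die), discrete_uniform_pmf(7, die))  # 1/6 0
print(continuous_uniform_pdf(0.5, 0, 2))  # 0.5
```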

02 Illustration

Example - Roll die until

Roll a fair die repeatedly. Find the probabilities that:

(a) At most 2 threes occur in the first 5 rolls.

(b) There is no three in the first 4 rolls, using a geometric variable.

Solution

(a)

(1) Label variables and events:

Use a variable $X \sim \text{Bin}(5, 1/6)$ to count the number of threes among the first five rolls.

Seek $P(X \le 2)$ as the answer.

(2) Calculations:

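Divide into exclusive events:

$$P(X \le 2) = P_X(0) + P_X(1) + P_X(2) = \left(\tfrac56\right)^5 + \binom51 \tfrac16 \left(\tfrac56\right)^4 + \binom52 \left(\tfrac16\right)^2 \left(\tfrac56\right)^3$$

$$= \frac{3125 + 3125 + 1250}{7776} = \frac{625}{648} \approx 0.965$$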
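The arithmetic in part (a) can be double-checked with exact rational arithmetic. This Python sketch uses `fractions.Fraction` and `math.comb` from the standard library; the helper name `binomial_pmf` is our own.

```python
from fractions import Fraction
from math import comb


def binomial_pmf(k: int, n: int, p: Fraction) -> Fraction:
    """Exact Bin(n, p) PMF: C(n, k) p^k (1 - p)^(n - k)."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


p = Fraction(1, 6)  # probability of rolling a three on one roll
n = 5               # number of rolls considered
# P(X <= 2) = P_X(0) + P_X(1) + P_X(2): the exclusive events from step (2).
answer = sum(binomial_pmf(k, n, p) for k in range(3))
print(answer, round(float(answer), 4))  # 625/648 0.9645
```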

(b)

(1) Label variables and events:

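Use a variable $Y \sim \text{Geo}(1/6)$ to give the roll number of the first time a three is rolled.

Seek $P(Y > 4)$ as the answer: no three in the first 4 rolls means the first three comes on roll 5 or later.

(2) Compute:

Sum the PMF formula for $k \ge 5$:

$$P(Y > 4) = \sum_{k=5}^{\infty} \left(\tfrac56\right)^{k-1} \tfrac16$$

(3) Recall geometric series formula:

For any geometric series with first term $a$ and ratio $|r| < 1$:

$$\sum_{j=0}^{\infty} a r^j = \frac{a}{1 - r}$$

Therefore, with $a = \left(\tfrac56\right)^4 \tfrac16$ and $r = \tfrac56$:

$$P(Y > 4) = \frac{\left(\tfrac56\right)^4 \tfrac16}{1 - \tfrac56} = \left(\tfrac56\right)^4 = \frac{625}{1296} \approx 0.482$$

This agrees with the direct argument: the first 4 rolls must all be non-threes, which has probability $(5/6)^4$.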
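For part (b), a short Python sketch comparing a truncated sum of the geometric PMF (the cutoff at $k = 200$ is an arbitrary choice) with the closed form from the geometric series and with the complement $1 - P(Y \le 4)$:

```python
from fractions import Fraction

p = Fraction(1, 6)  # probability of rolling a three
q = 1 - p


def geo_pmf(k: int) -> Fraction:
    """P_Y(k) = q^(k-1) p for Y ~ Geo(1/6)."""
    return q ** (k - 1) * p


# Truncated version of the infinite sum over k >= 5.
partial = sum(geo_pmf(k) for k in range(5, 201))
# Closed form a / (1 - r) with first term a = P_Y(5) = q^4 p and ratio r = q.
closed = geo_pmf(5) / (1 - q)
# Complement: 1 - P(Y <= 4).
complement = 1 - sum(geo_pmf(k) for k in range(1, 5))

print(closed, complement, float(closed), float(partial))
```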

Example - Cubs winning the World Series

Suppose the Cubs are playing the Yankees in the World Series. The series is best-of-7: the first team to win 4 games wins the series. What is the probability that the Cubs win the series?

Assume that for any given game the probability of the Cubs winning is $p$ and losing is $q = 1 - p$, independently of the other games.

Solution

Method (a): We solve the problem using a binomial distribution.

(1) Label variables and events:

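Use a variable $X \sim \text{Bin}(7, p)$. This counts the number of wins over 7 games, imagining that all 7 games are played even after the series is decided. Thus, for example, $P_X(4)$ is the probability that the Cubs win exactly 4 games over 7 played.

Seek $P(X \ge 4)$ as the answer: the Cubs win the series exactly when they would win at least 4 of the 7 games.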

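(2) Calculate using binomial PMF:

$$P(X \ge 4) = \sum_{k=4}^{7} \binom{7}{k} p^k q^{7-k}$$

Insert data:

$$= \binom74 p^4 q^3 + \binom75 p^5 q^2 + \binom76 p^6 q + \binom77 p^7$$

Compute:

$$= 35 p^4 q^3 + 21 p^5 q^2 + 7 p^6 q + p^7$$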
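Method (a) written as a function of the per-game win probability $p$, in a Python sketch; the sample values of $p$ are illustrative assumptions, not values from the original problem.

```python
from math import comb


def cubs_win_binomial(p: float) -> float:
    """P(X >= 4) for X ~ Bin(7, p), imagining all 7 games are played."""
    q = 1 - p
    return sum(comb(7, k) * p**k * q ** (7 - k) for k in range(4, 8))


for p in (0.4, 0.5, 0.6):  # illustrative per-game win probabilities
    print(p, round(cubs_win_binomial(p), 4))
```

At $p = 0.5$ the result is exactly $0.5$, as symmetry between the two teams suggests.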

Method (b): We solve the problem using a Pascal distribution instead.

(1) Label variables and events:

Use a variable $Y \sim \text{Pas}(4, p)$. This measures the discrete wait time until the 4th win. Thus, for example, $P_Y(n)$ is the probability that the Cubs win their 4th game on game number $n$.

Seek $P(Y \le 7)$ as the answer.

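(2) Calculate using Pascal PMF:

$$P(Y \le 7) = \sum_{n=4}^{7} \binom{n-1}{3} p^4 q^{n-4}$$

Insert data:

$$= \binom33 p^4 + \binom43 p^4 q + \binom53 p^4 q^2 + \binom63 p^4 q^3$$

Compute:

$$= p^4 \left(1 + 4q + 10q^2 + 20q^3\right)$$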

Notice: The calculation looks very different from method (a) right up to the end, yet the two final answers agree. For example, at $p = 1/2$ both give exactly $1/2$.
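To see numerically that the two methods agree, this Python sketch evaluates both final expressions on a grid of $p$ values (the grid and function names are illustrative assumptions). A grid check is evidence, not a proof; the proof is the observation in step (1) of method (a).

```python
from math import comb, isclose


def cubs_win_binomial(p: float) -> float:
    """Method (a): P(X >= 4) for X ~ Bin(7, p)."""
    q = 1 - p
    return sum(comb(7, k) * p**k * q ** (7 - k) for k in range(4, 8))


def cubs_win_pascal(p: float) -> float:
    """Method (b): P(Y <= 7) for Y ~ Pas(4, p), the game number of the 4th win."""
    q = 1 - p
    return sum(comb(n - 1, 3) * p**4 * q ** (n - 4) for n in range(4, 8))


# Compare the two methods for p = 0.00, 0.01, ..., 1.00.
for i in range(101):
    p = i / 100
    assert isclose(cubs_win_binomial(p), cubs_win_pascal(p), abs_tol=1e-12)
print("methods agree")
```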

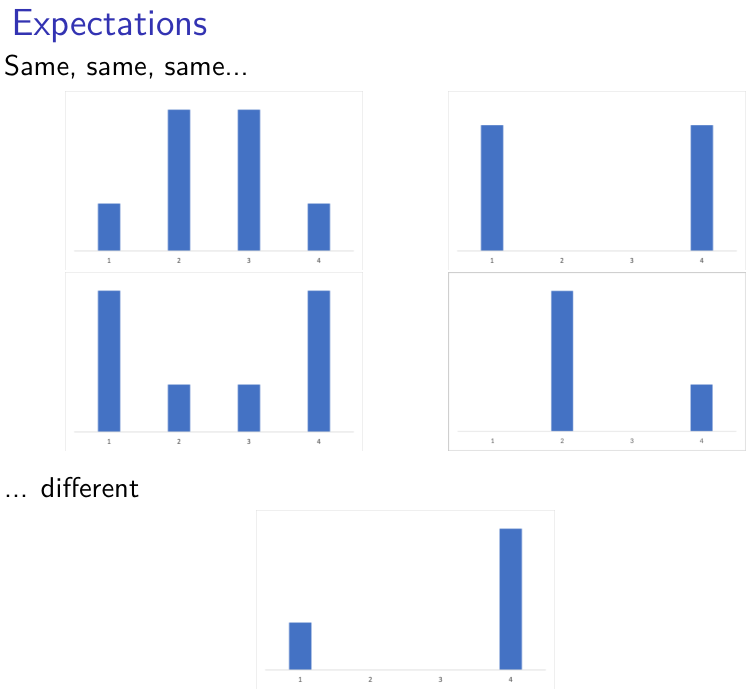

Expectation and variance

03 Theory - Expectation and variance

Expected value

The expected value $E[X]$ of a random variable $X$ is the weighted average of the values of $X$, weighted by the probability of those values.

Discrete formula using PMF:

$$E[X] = \sum_k k \, P_X(k)$$

Continuous formula using PDF:

$$E[X] = \int_{-\infty}^{\infty} x \, f_X(x) \, dx$$

Notes:

- Expected value is sometimes called expectation, or even just mean, although the latter is best reserved for statistics.

- The Greek letter $\mu$ is also used in contexts where ‘mean’ is used.
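Both expectation formulas can be sketched in Python. The discrete version sums $k \, P_X(k)$ over a PMF stored as a dictionary; the continuous version approximates the integral with a midpoint Riemann sum, a numerical stand-in chosen here for illustration that assumes $f_X$ is zero outside a known interval $[a, b]$. Function names are hypothetical.

```python
from fractions import Fraction


def expected_value_discrete(pmf):
    """E[X] = sum of k * P_X(k), with the PMF given as {value: probability}."""
    return sum(k * prob for k, prob in pmf.items())


def expected_value_continuous(f, a, b, steps=100_000):
    """Approximate E[X] = integral of x f_X(x) dx by a midpoint Riemann sum,
    assuming f_X(x) = 0 outside [a, b]."""
    h = (b - a) / steps
    midpoints = (a + (i + 0.5) * h for i in range(steps))
    return sum(x * f(x) for x in midpoints) * h


die = {k: Fraction(1, 6) for k in range(1, 7)}
print(expected_value_discrete(die))  # 7/2
# The uniform PDF on [0, 2] is f_X(x) = 1/2, so the result should be close to 1.
print(expected_value_continuous(lambda x: 0.5, 0.0, 2.0))
```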

Let $X$ be a random variable, and write $\mu = E[X]$.

Variance

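The variance $\text{Var}(X)$ measures the average squared deviation of $X$ from $\mu$. It indicates how concentrated $X$ is around $\mu$: the smaller the variance, the more tightly $X$ clusters around $\mu$.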

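- Defining formula: $\text{Var}(X) = E\big[(X - \mu)^2\big]$

- Shorter formula: $\text{Var}(X) = E[X^2] - \mu^2$ (expand the square and use linearity of expectation)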

Calculating variance

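- Discrete formula using PMF: $\text{Var}(X) = \sum_k (k - \mu)^2 \, P_X(k)$

- Continuous formula using PDF: $\text{Var}(X) = \int_{-\infty}^{\infty} (x - \mu)^2 f_X(x) \, dx$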

Standard deviation

The quantity $\sigma_X = \sqrt{\text{Var}(X)}$ is called the standard deviation of $X$.
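A short Python sketch (function names hypothetical) confirming, for a fair die, that the defining and shorter variance formulas agree, and taking the square root to get $\sigma_X$:

```python
from fractions import Fraction
from math import sqrt


def mean(pmf):
    """mu = E[X] for a PMF given as {value: probability}."""
    return sum(k * prob for k, prob in pmf.items())


def variance_defining(pmf):
    """Var(X) = sum of (k - mu)^2 P_X(k), i.e. E[(X - mu)^2]."""
    mu = mean(pmf)
    return sum((k - mu) ** 2 * prob for k, prob in pmf.items())


def variance_shorter(pmf):
    """Var(X) = E[X^2] - mu^2."""
    mu = mean(pmf)
    return sum(k**2 * prob for k, prob in pmf.items()) - mu**2


die = {k: Fraction(1, 6) for k in range(1, 7)}  # fair die
var = variance_defining(die)
sigma = sqrt(float(var))  # standard deviation sigma_X
print(var, variance_shorter(die), round(sigma, 4))  # 35/12 35/12 1.7078
```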

04 Illustration

Example - Tokens in bins

Consider a game like this: a coin is flipped; if $H$ then draw a token from Bin 1, if $T$ then from Bin 2.

- Bin 1 contents: 1 token $1,000, and 9 tokens $1

- Bin 2 contents: 5 tokens $50, and 5 tokens $1

It costs $50 to enter the game. Should you play it? (A lot of times?) How much would you pay to play?

Solution

(1) Setup:

Let $X$ be a random variable measuring your winnings in the game (the value of the token drawn, not counting the entry fee).

The possible values of $X$ are 1, 50, and 1000.

(2) Find the PMF of $X$:

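For $k = 1$ have $P_X(1) = \tfrac12 \cdot \tfrac{9}{10} + \tfrac12 \cdot \tfrac{5}{10} = 0.7$

For $k = 50$ have $P_X(50) = \tfrac12 \cdot \tfrac{5}{10} = 0.25$

For $k = 1000$ have $P_X(1000) = \tfrac12 \cdot \tfrac{1}{10} = 0.05$

These add to 1, and $P_X(k) = 0$ for all other $k$.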

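(3) Find $E[X]$ using the discrete formula:

$$E[X] = 1 \cdot 0.7 + 50 \cdot 0.25 + 1000 \cdot 0.05 = 0.7 + 12.5 + 50 = 63.2$$

Since $E[X] = 63.2 > 50$, if you play it many times at $50 per game you will, on average, come out ahead by about 13.20 dollars per game.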
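The PMF and expectation for the token game can be reproduced with exact fractions. In this Python sketch the bin contents come from the game description; the dictionary layout is just one convenient encoding.

```python
from fractions import Fraction

half = Fraction(1, 2)  # fair coin chooses Bin 1 or Bin 2
# Bin contents as {token value: count}, from the game description.
bin1 = {1000: 1, 1: 9}
bin2 = {50: 5, 1: 5}

pmf = {}
for bin_contents in (bin1, bin2):
    total = sum(bin_contents.values())
    for value, count in bin_contents.items():
        # P(X = value) accumulates P(this bin) * P(this token | this bin).
        pmf[value] = pmf.get(value, 0) + half * Fraction(count, total)

expected = sum(value * prob for value, prob in pmf.items())
print({v: float(pr) for v, pr in sorted(pmf.items())})  # {1: 0.7, 50: 0.25, 1000: 0.05}
print(float(expected))  # 63.2
```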

Challenge Q: If you start with $200 and keep playing to infinity, how likely is it that you go broke?

Example - Expected value: rolling dice

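Let $X$ be a random variable counting the number of dots given by rolling a single fair die.

Then:

$$E[X] = \frac{1 + 2 + 3 + 4 + 5 + 6}{6} = 3.5$$

Let $Y$ be an RV that counts the total dots on a roll of two fair dice.

The PMF of $Y$:

$$P_Y(k) = \frac{6 - |k - 7|}{36}, \qquad k = 2, 3, \ldots, 12$$

Then:

$$E[Y] = \sum_{k=2}^{12} k \, P_Y(k) = \frac{252}{36} = 7$$

Notice that $E[Y] = 7 = 2 \, E[X]$.

In general, $E[U + V] = E[U] + E[V]$ for any random variables $U$ and $V$, whether or not they are independent (linearity of expectation).

Let $X_1$ count the dots on a green die and $X_2$ the dots on a red die.

From the earlier calculation, $E[X_1] = 3.5$ and $E[X_2] = 3.5$.

Since $Y = X_1 + X_2$, we derive $E[Y] = 3.5 + 3.5 = 7$ by simple addition!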
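The two-dice calculation can be checked by brute force: enumerate all 36 equally likely (green, red) outcomes, build the PMF of $Y$, and compare $E[Y]$ with $E[X_1] + E[X_2]$. A Python sketch:

```python
from fractions import Fraction
from itertools import product

die = range(1, 7)

# E[X] for a single fair die.
e_single = sum(Fraction(k, 6) for k in die)

# PMF of Y = X_1 + X_2 by enumerating all 36 equally likely (green, red) pairs.
pmf_y = {}
for green, red in product(die, repeat=2):
    pmf_y[green + red] = pmf_y.get(green + red, 0) + Fraction(1, 36)

e_sum = sum(k * prob for k, prob in pmf_y.items())
print(e_single, e_sum)  # 7/2 7
assert e_sum == e_single + e_single  # linearity: E[X_1 + X_2] = E[X_1] + E[X_2]
```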

Example - Expected value by finding new PMF

Let have distribution given by this PMF:

Find .

Solution

(1) Compute the PMF of :

PMF arranged by possible value:

(2) Calculate the expectation:

Using formula for discrete PMF:
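Since the technique works for any PMF, this Python sketch illustrates it on a hypothetical PMF ($X$ uniform on $\{-2, -1, 0, 1, 2\}$) and a hypothetical function $g(x) = x^2$: group the values of $X$ by $g(x)$ to get the new PMF, then apply the discrete expectation formula. The helper name `pmf_of_function` is our own.

```python
from fractions import Fraction


def pmf_of_function(pmf_x, g):
    """PMF of Y = g(X): P_Y(y) sums P_X(x) over every x with g(x) = y."""
    pmf_y = {}
    for x, prob in pmf_x.items():
        y = g(x)
        pmf_y[y] = pmf_y.get(y, 0) + prob
    return pmf_y


def square(x):
    """Hypothetical g, so that Y = X^2."""
    return x**2


# Hypothetical PMF for illustration: X uniform on {-2, -1, 0, 1, 2}.
pmf_x = {x: Fraction(1, 5) for x in range(-2, 3)}

pmf_y = pmf_of_function(pmf_x, square)  # {4: 2/5, 1: 2/5, 0: 1/5}
e_y = sum(y * prob for y, prob in pmf_y.items())  # E[Y] from the new PMF
# Cross-check: summing g(x) P_X(x) directly must give the same value.
assert e_y == sum(square(x) * prob for x, prob in pmf_x.items())
print(e_y)  # 2
```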

Exercise - Variance using simplified formula

Suppose has this PMF:

1 2 3

Find using the formula with .

(Hint: you should find and along the way.)
